Technical SEO to dominate AI and Google search

Know exactly where Googlebot and AI crawlers go on your site and where they stop. Close the gaps. Get indexed. Get cited.

AI Search Is Already Here

Make sure your content gets found, crawled and cited.

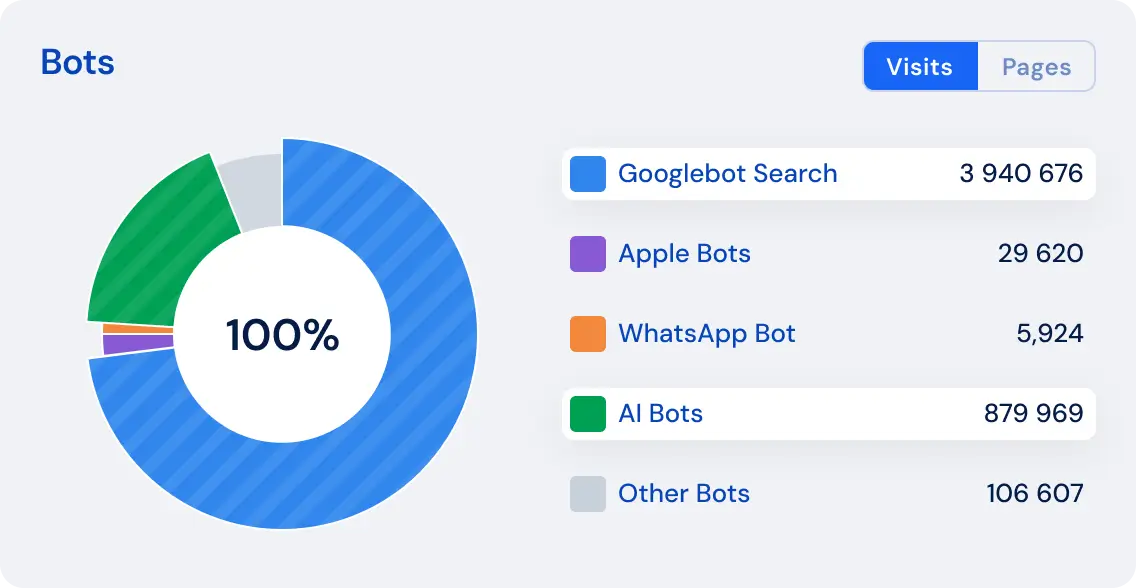

- Track AI Bot Activity — GPTBot, ClaudeBot, PerplexityBot and others are crawling your site. See exactly which pages they visit and how often.

- Compare AI vs Googlebot Behavior — AI crawlers don't behave like Googlebot. Understanding the difference is the new competitive edge.

- Identify What AI Crawlers Can't See — content rendered by JavaScript simply doesn't exist for AI bots. Find the gaps before they cost you citations.
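As a rough illustration of what tracking bot activity and comparing it with Googlebot involves, here is a minimal sketch that counts hits per bot from raw access-log lines. It is not how JetOctopus works internally. It assumes the Apache/Nginx "combined" log format and identifies bots by user-agent substring only, which can be spoofed; the function names `count_bot_hits` and `pages_missed_by` are hypothetical.

```python
import re
from collections import Counter, defaultdict

# Bot names taken from the list above. Matching on user-agent substrings is an
# assumption and can be spoofed, so treat the counts as indicative only.
BOT_TOKENS = ("Googlebot", "GPTBot", "ClaudeBot", "PerplexityBot")

# Assumed input: Apache/Nginx "combined" log format.
LINE_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

def count_bot_hits(lines):
    """Return {bot_name: Counter({path: hits})} for the bots in BOT_TOKENS."""
    hits = defaultdict(Counter)
    for line in lines:
        match = LINE_RE.search(line)
        if not match:
            continue  # skip lines that are not in the assumed format
        ua = match.group("ua").lower()
        for bot in BOT_TOKENS:
            if bot.lower() in ua:
                hits[bot][match.group("path")] += 1
                break
    return hits

def pages_missed_by(hits, bot, reference="Googlebot"):
    """Paths the reference bot fetched that `bot` never requested."""
    return sorted(set(hits[reference]) - set(hits[bot]))
```

Calling `pages_missed_by(hits, "GPTBot")` on the result lists URLs that Googlebot requested but GPTBot did not, which is one simple way to surface the behavior gap described above.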

Technical Errors Don't Just Lower Rankings. They Kill Revenue.

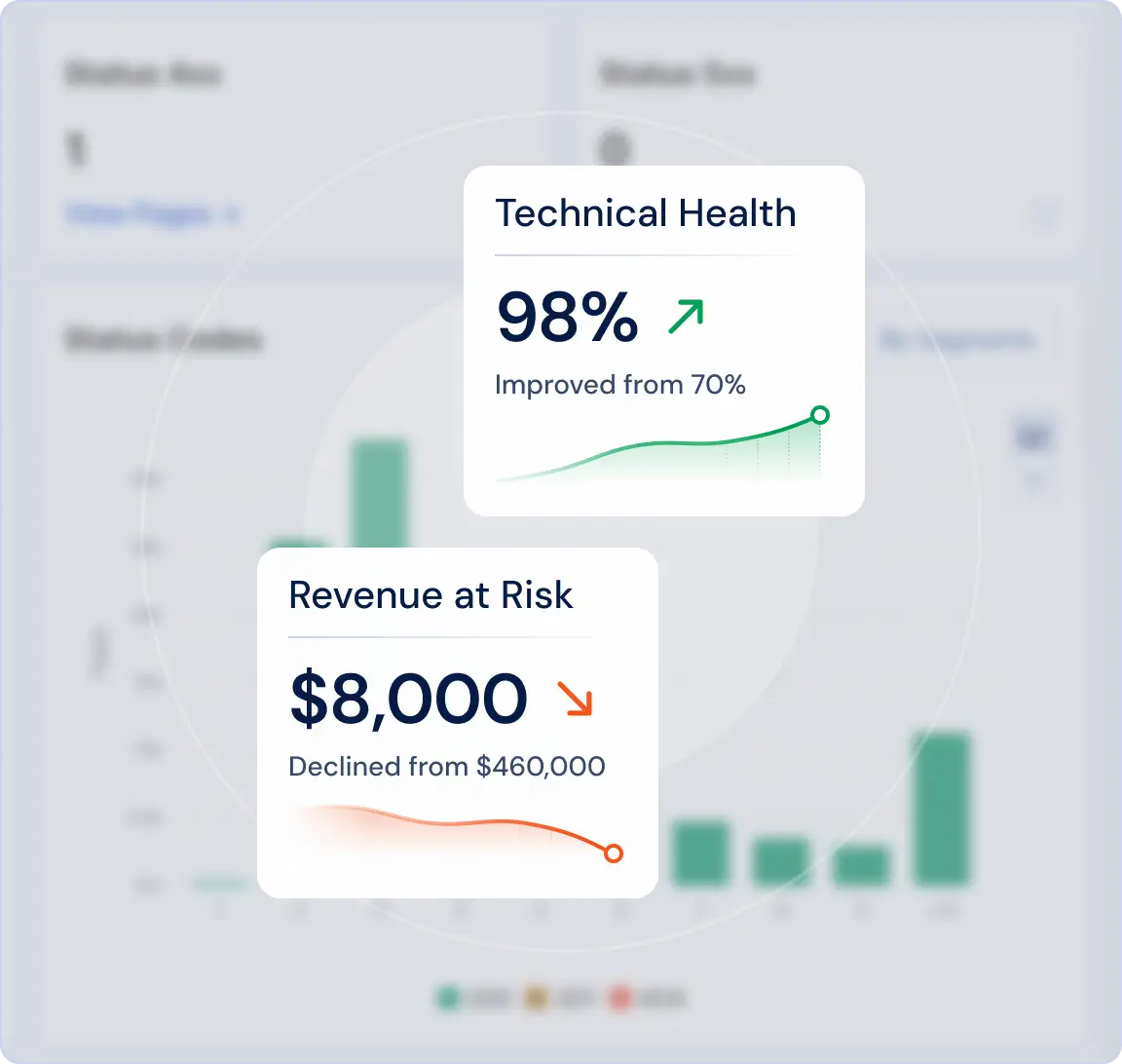

Pages that bots can't crawl won't rank. Content that isn't rendered doesn't exist for search or AI crawlers. In AI search, the stakes are even higher — you're either cited to millions of users or completely invisible.

- Detect tech SEO issues before they affect rankings

- See the revenue impact of every technical issue

- Stay visible in both Google and AI search results

Precise Data Your Developers Can Act On

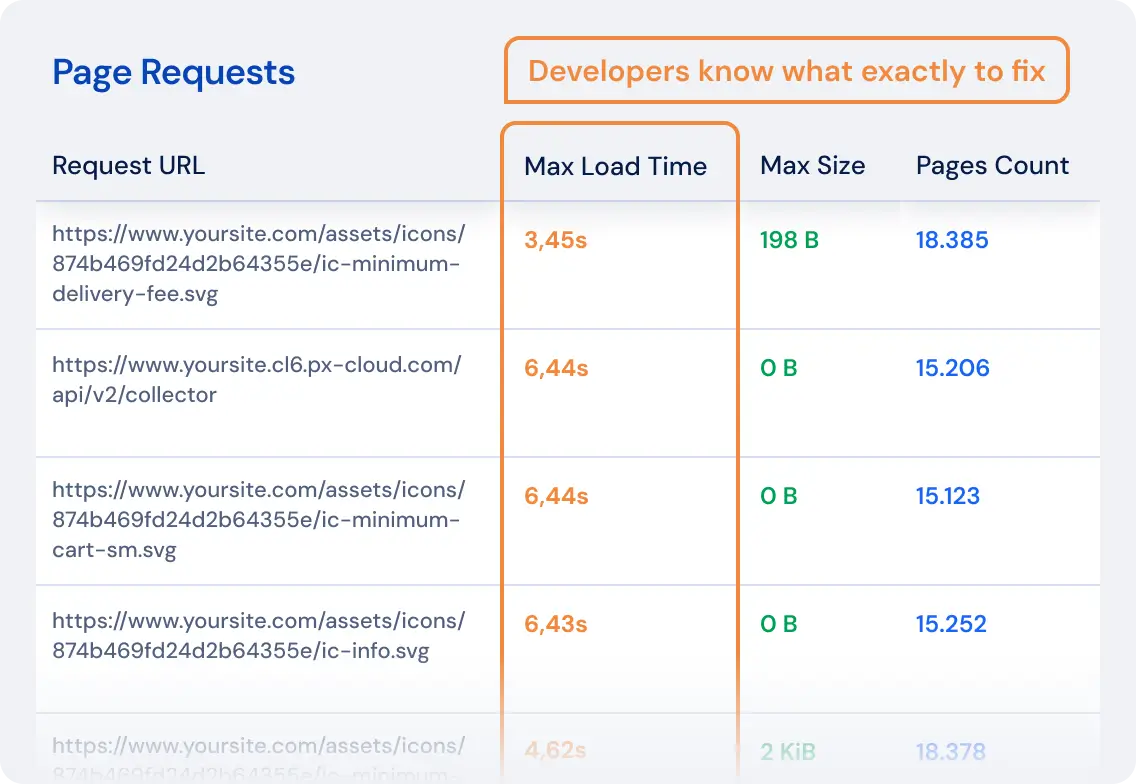

Stop the ticket battles. Give your engineering team precise, log-verified data that shows exactly what to fix and why. No guessing, no back-and-forth communication.

Share live dashboards directly with your developers.

One Platform. Six Modules.

Each module solves a specific visibility problem across Google, AI search and everything in between.

Log Analyzer

See exactly how Google and AI bots navigate your site — what they prioritize, what they ignore and where they waste budget.

JavaScript Crawler

Make sure search engines and AI crawlers see what your users see. Catch zero-content pages before they cost you traffic.
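To make "zero-content pages" concrete, the sketch below counts the words visible in a page's raw HTML, which is all a crawler sees if it does not execute JavaScript. This is an illustrative check only, not the JetOctopus crawler's method; the helper names and the `min_words` threshold of 50 are hypothetical.

```python
from html.parser import HTMLParser

class VisibleTextParser(HTMLParser):
    """Collects text that appears outside <script> and <style> elements."""
    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())

def visible_word_count(raw_html):
    """Number of words present in the HTML before any JavaScript runs."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    return len(" ".join(parser.chunks).split())

def looks_like_zero_content(raw_html, min_words=50):
    """Flag pages whose raw HTML carries fewer than `min_words` words (hypothetical threshold)."""
    return visible_word_count(raw_html) < min_words
```

A page that ships only an empty `<div id="root"></div>` plus a script bundle would be flagged here, while the same page after server-side rendering would not.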

GSC Integration

16+ months of full data. Spot cannibalization, zombie pages and ranking decay that GSC alone won't show you.

Google Analytics Insights

See which technical SEO fixes actually moved revenue. Connect page health to organic performance.

Alerts

Get notified before drops happen, not after. Monitor Googlebot and AI bot behavior, plus Core Web Vitals, in real time.

AI Internal Linker

Find and prioritize link opportunities that boost weaker pages with stronger ones. Improve crawl efficiency by up to 30%.

See the Complete Journey: From Crawl to Revenue

Most tools show you fragments. JetOctopus connects Crawl, Logs, GSC and GA4 to reveal the complete lifecycle of every page.

What Exists

Discover every page, its status and technical health.

Who Visits

See which bots crawl your site: Googlebot, GPTBot and 40+ others.

What Ranks

16+ months of search data, reduced sampling.

What Earns

Connect organic traffic to actual revenue.

Trusted by the Experts

The Enterprise Technical SEO Platform Built for Scale

- 1M+ pages/day: audit your entire site in hours, not weeks

- 100% full data: no blind spots

- Unlimited projects, users, crawls: all included

What Happens When You Take Technical SEO Seriously.

Real results from JetOctopus customers.

Enterprise Power. Zero Enterprise Friction.

What you get with JetOctopus that others charge extra for or don't offer at all.

Typical Enterprise Tools

JetOctopus

Built for Large Sites. High Stakes. Real Data.

JetOctopus is designed for teams where a single indexation failure costs real revenue.

E-commerce & Marketplace Sites

Every unindexed page is a missed sale.

- Run full-site crawls daily, not weekly

- See which pages Google and AI crawlers skip

- Unlimited users, one account

- Enterprise capability at a fraction of the price

Multi-Client Management

One subscription, unlimited client domains. No per-domain or per-seat fees.

- No domain or project limits

- Set up logs once, they run forever

- Give clients read-only access at no extra charge

- Alerts catch and prioritize issues across all clients automatically

Technical SEO Specialists

Professional-grade tools. No annual lock-in.

- AI Internal Linker free forever

- Cancel or pause anytime

- 400+ preset charts, ready to present to clients

- Full log setup support included

Get Found by Google. Get Cited by AI.

Enterprise-grade intelligence for revenue-critical SEO decisions.