The log file analyzer you’ve been looking for

JetOctopus is built for technical SEO and log file analysis at scale, with real-time insights into how search engines and AI bots crawl your site.

Get detailed crawl log reporting with pre-built issue reports, visual dashboards and real-time data streams — so you can quickly identify and prioritize SEO issues.

Analyze AI Bot Activity

AI bots like ChatGPT, Claude and Perplexity are already crawling your website, and they may use your content as a data source for AI-generated answers. JetOctopus now makes this activity fully measurable.

The AI Bots Analyzer report helps you track how often these bots visit your site, which pages they crawl, and what status codes they receive. This way, you can understand how AI bots interact with your content, control unwanted access, and spot new opportunities for visibility.
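To make the idea concrete, here is a minimal sketch of the same kind of breakdown done by hand on raw access logs. It is not how JetOctopus implements the report. It assumes logs in the common "combined" format, and the user-agent substrings in `AI_BOT_TOKENS` are illustrative assumptions that should be checked against each vendor's current documentation. The sample log lines are made up.

```python
import re
from collections import Counter

# Combined Log Format:
# ip - - [time] "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

# Assumed user-agent substrings, mapped to a display name.
AI_BOT_TOKENS = {
    "GPTBot": "ChatGPT",
    "ClaudeBot": "Claude",
    "PerplexityBot": "Perplexity",
}


def summarize_ai_bots(lines):
    """Return (hits per bot, hits per (bot, path), hits per (bot, status))."""
    by_bot, by_page, by_status = Counter(), Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match["agent"]
        for token, bot in AI_BOT_TOKENS.items():
            if token in agent:
                by_bot[bot] += 1
                by_page[(bot, match["path"])] += 1
                by_status[(bot, int(match["status"]))] += 1
                break
    return by_bot, by_page, by_status


# Made-up sample lines for illustration only.
SAMPLE_LINES = [
    '203.0.113.5 - - [10/Mar/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 - - [10/Mar/2025:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 128 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 - - [10/Mar/2025:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [10/Mar/2025:10:02:00 +0000] "GET / HTTP/1.1" 200 900 "-" "Mozilla/5.0 (Windows NT 10.0) Chrome/120.0"',
]

by_bot, by_page, by_status = summarize_ai_bots(SAMPLE_LINES)
for bot, hits in by_bot.most_common():
    print(bot, hits)
```

A user-agent string can be spoofed, so counts like these are only a first approximation of real bot activity.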

Identify Crawl Budget Waste

Crawl budget waste is a significant issue. Instead of valuable, high-priority pages, Googlebot often crawls irrelevant or outdated ones. Analyze crawl frequency, status codes and crawl budget waste by site section to understand where bots spend their time and where resources are wasted.
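As a rough illustration of a per-section breakdown, the sketch below groups bot hits by the first path segment and counts how many of them did not return a 200. Treating every non-200 response as waste is a simplifying assumption made here, not JetOctopus's definition, and the function names and sample hits are hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def section_of(path):
    """Return the first path segment, e.g. '/blog/post-1?x=1' -> '/blog'."""
    segments = [s for s in urlsplit(path).path.split("/") if s]
    return "/" + segments[0] if segments else "/"


def crawl_waste_by_section(hits):
    """hits: iterable of (path, status) pairs for a single bot.

    Returns {section: (total_hits, wasted_hits)}.
    """
    totals = defaultdict(lambda: [0, 0])
    for path, status in hits:
        bucket = totals[section_of(path)]
        bucket[0] += 1
        if status != 200:  # assumption: any non-200 response counts as waste
            bucket[1] += 1
    return {section: tuple(counts) for section, counts in totals.items()}


# Made-up Googlebot hits for illustration only.
SAMPLE_HITS = [
    ("/blog/post-1", 200),
    ("/blog/post-2?utm=x", 200),
    ("/search?q=shoes", 200),
    ("/search?q=boots", 404),
    ("/old/catalog", 301),
    ("/", 200),
]

report = crawl_waste_by_section(SAMPLE_HITS)
for section, (total, wasted) in sorted(report.items()):
    print(f"{section}: {total} hits, {wasted} wasted")
```

In practice, the definition of waste would also cover low-value URLs that return 200, such as internal search results or parameterized duplicates.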

The Most Important Problems in Logs

Detect critical issues early, including indexing blockers, redirect chains, and inefficient crawling patterns before they impact your rankings.

JetOctopus tracks key log issues such as increased load time, unintended redirects, low-value page generation, AJAX URLs and more.

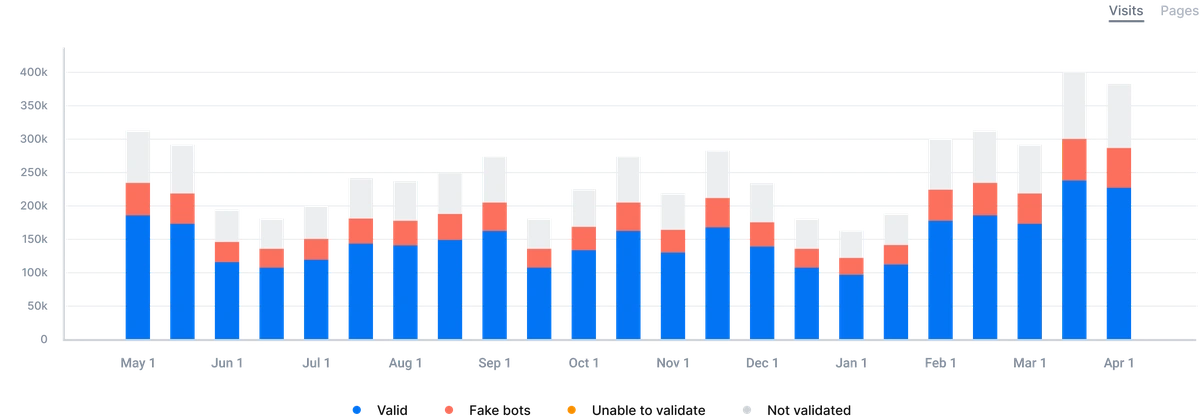

Analyze Crawl Budget Dynamics

Changes on a website can influence Googlebot's behavior, in particular how often it visits. An increase in Googlebot visits is not always a good thing and can even be harmful, while a decrease is not always a bad sign.

The Bot Dynamics report helps you understand changes in Googlebot's behavior and identify critical issues as soon as they emerge. With logs at hand, you can avoid unexpected drops in SEO traffic.
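The underlying idea can be sketched in a few lines: count bot visits per day and flag any day where the count moves sharply against the previous one. The 50% threshold, the function name and the sample counts below are assumptions chosen for illustration, not values taken from JetOctopus.

```python
def flag_changes(visits_by_day, threshold=0.5):
    """Return (day, previous, current) for days whose visit count changed
    by more than `threshold` (relative) compared with the previous day.

    visits_by_day: dict mapping ISO date strings to visit counts.
    """
    days = sorted(visits_by_day)  # ISO dates sort chronologically as strings
    flagged = []
    for prev_day, day in zip(days, days[1:]):
        prev, cur = visits_by_day[prev_day], visits_by_day[day]
        if prev and abs(cur - prev) / prev > threshold:
            flagged.append((day, prev, cur))
    return flagged


# Made-up daily Googlebot visit counts for illustration only.
SAMPLE_VISITS = {
    "2025-03-01": 100,
    "2025-03-02": 110,
    "2025-03-03": 40,
    "2025-03-04": 90,
}

flagged = flag_changes(SAMPLE_VISITS)
for day, prev, cur in flagged:
    print(f"{day}: {prev} -> {cur}")
```

Both sudden drops and sudden spikes are flagged, since either can signal a problem worth investigating in the logs.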

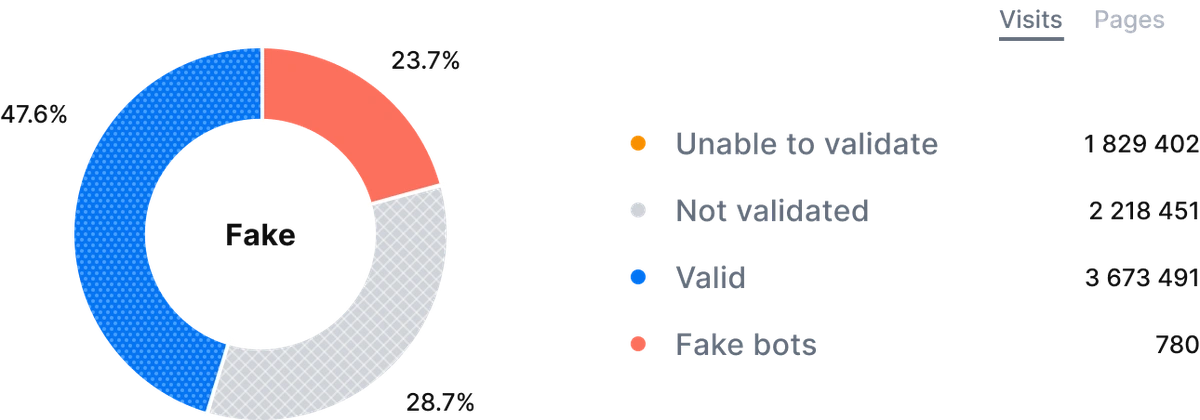

Dynamics of fake bots

SEO opportunities with log file analysis

Improve Your Website’s Visibility

Check your website’s accessibility for proper indexation and ensure it’s easily found by users searching for your products and services.

Segmentation and Filtering

Use advanced segmentation to filter for bots and site sections, so you can analyze Googlebot behavior, isolate problem areas, and focus on high-impact pages.

Boost Organic Traffic

Fix technical errors and other on-site SEO issues to get more SEO traffic. Technical SEO is always predictable.

AI Logs Analysis

With advanced AI log analysis, you can track how modern crawlers like ChatGPT, Claude and Perplexity interact with your site and uncover new visibility opportunities.

Increase Your Website’s Indexing

Optimize site structure, strengthen internal linking, enrich content, fix duplicates and refine indexation tags to gain a boost in SEO traffic.

Secure your SEO

Keep your raw logs at hand and be the first to spot errors on your website, so you can fix them before Googlebot encounters them.

JetOctopus is a top solution for log file insights, trusted by technical SEOs to uncover crawl inefficiencies, improve indexation, and optimize large-scale websites.