The 2026 Technical SEO Playbook: Optimization for AI Crawlers & Agents

This article is based on the third and final part of the JetOctopus AI webinar series. You can see part one here and part two here.

SEO evolved. Search changed. The question is whether AI will choose you as the source of the answer.

In the third and final part of the JetOctopus AI webinar series, Stanislav Dashevskyi, Head of SEO and Customer Success at JetOctopus, presented the technical roadmap SEOs need to follow in 2026 to properly optimize for AI crawling and AI visibility. Drawing on real server log data from thousands of websites, the session focused on what actually works: crawling rules, site structure, JavaScript rendering, page speed, content optimization and the metrics that matter.

A quick recap: where we are

Before diving into technical specifics, it helps to remember the scale of the shift underway.

AI bot activity now amounts to roughly 40–50% of Googlebot's activity across the web. But the breakdown is more important than the total: only 30–35% of all AI bot activity consists of training and search crawls. The remaining 65–70% consists of searches performed on behalf of real users – people asking questions in ChatGPT, Claude, Perplexity and similar platforms.

This means AI bots are not primarily archiving your content. They are actively researching answers for users right now. And if your site is not technically accessible to them, it will not appear in those answers.

As Stan put it: SEO is no longer about rankings. It is about your content being chosen as the source of an answer.

Three types of AI bots and why the difference matters

Not all AI bot visits are equal. Understanding the difference between the three types is the foundation of everything else in this playbook.

AI training bots collect content to train the underlying model. They crawl broadly, ignore click depth, and are perfectly happy reading pages that are ten or more clicks from your homepage as long as the content appears valuable. A training visit means AI knows your content exists. It does not mean users will see it.

AI search bots crawl to find new URLs and fetch HTML for use in answers. Unlike training bots, they behave closer to Googlebot – they drop off quickly beyond two or three clicks from the homepage. Their job is to find fresh, accessible content and pass it to the answer engine.

AI user bots are the ones that matter most for visibility. These visits happen when a real user types a question into ChatGPT, Perplexity or Claude and the AI goes out to research the answer on their behalf. A user bot visit is the closest proxy to an impression in an AI interface. If your pages are not visited by user bots, they are not appearing in answers.

The critical insight from JetOctopus log data: your site can be heavily crawled by training and search bots and still be completely absent from AI-generated answers. User bot activity is what connects crawling to actual visibility, and it is driven by speed, structure and content quality, not crawl volume alone.

Crawling rules: what AI bots actually follow

The first thing most SEOs get wrong is assuming that AI bots behave like Googlebot. They do not.

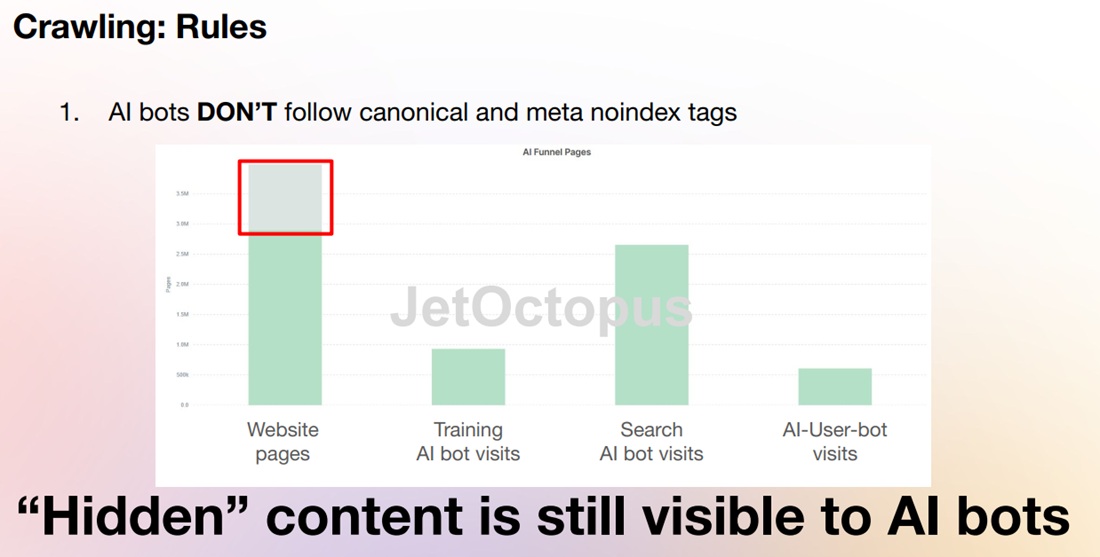

AI bots ignore canonical tags and meta noindex. These directives have no effect on AI crawlers. Content that you have technically hidden from Google – pages marked noindex and duplicate pages with canonical tags – is still fully visible to AI bots. If those pages have internal links pointing to them, AI bots will find and read them.

Robots.txt is the one file AI bots respect. All major AI platforms – ChatGPT, Claude, Perplexity, Gemini – follow robots.txt directives strictly. If you need to block AI bots from crawling specific sections of your site, robots.txt is the only reliable mechanism available. This is a significant shift: a file that many SEOs have used mainly for housekeeping, such as managing the crawling of images and scripts, is now the primary tool for controlling AI access to your content.
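Because robots.txt is the control that matters here, it is worth testing rules before deploying them. This sketch uses Python's standard `urllib.robotparser` to check a hypothetical robots.txt that keeps two AI training crawlers out of `/internal/` and `/drafts/`. The paths and the choice of bots are illustrative assumptions. Python's parser implements classic prefix matching, so it may not reproduce every platform's handling of wildcards.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: two AI training crawlers share one group that blocks
# /internal/ and /drafts/; every other crawler may fetch everything.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: ClaudeBot
Disallow: /internal/
Disallow: /drafts/

User-agent: *
Disallow:
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    rules = build_parser(ROBOTS_TXT)
    for agent in ("GPTBot", "ClaudeBot", "ChatGPT-User"):
        print(agent, rules.can_fetch(agent, "https://example.com/internal/report"))
```

A group naming `GPTBot` does not apply to `ChatGPT-User`: each crawler token is matched separately, so blocking one type of bot leaves the others untouched unless you list them too.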

As a side note: llms.txt (often written as LLM.txt) does nothing. Despite growing interest in llms.txt as a mechanism for guiding AI behavior, JetOctopus log data shows that major AI bots do not read this file at all: requests for it do not show up in the logs. Don’t invest time in it.

Sitemaps: partially useful. ChatGPT and Claude use XML sitemaps to discover new URLs. Perplexity currently does not – it relies only on internal links. Sitemaps remain worth maintaining, but they are not a universal solution.
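Since ChatGPT and Claude read XML sitemaps, keeping `<lastmod>` accurate is a cheap way to surface new and updated URLs to them. Below is a minimal sketch that builds a sitemap with Python's standard `xml.etree.ElementTree`, following the sitemaps.org 0.9 schema. The URLs and dates are placeholders, and for Perplexity the same pages still need internal links.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: list[tuple[str, str]]) -> bytes:
    """Build a minimal XML sitemap from (loc, lastmod) pairs; lastmod is YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # UTF-8 bytes with an XML declaration, ready to write to sitemap.xml
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


if __name__ == "__main__":
    xml_bytes = build_sitemap([
        ("https://example.com/", "2026-01-15"),
        ("https://example.com/blog/new-post", "2026-02-01"),
    ])
    with open("sitemap.xml", "wb") as f:
        f.write(xml_bytes)
```

ElementTree escapes characters such as `&` in URLs automatically, which the sitemap protocol requires.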

Site structure: the AI crawl budget problem

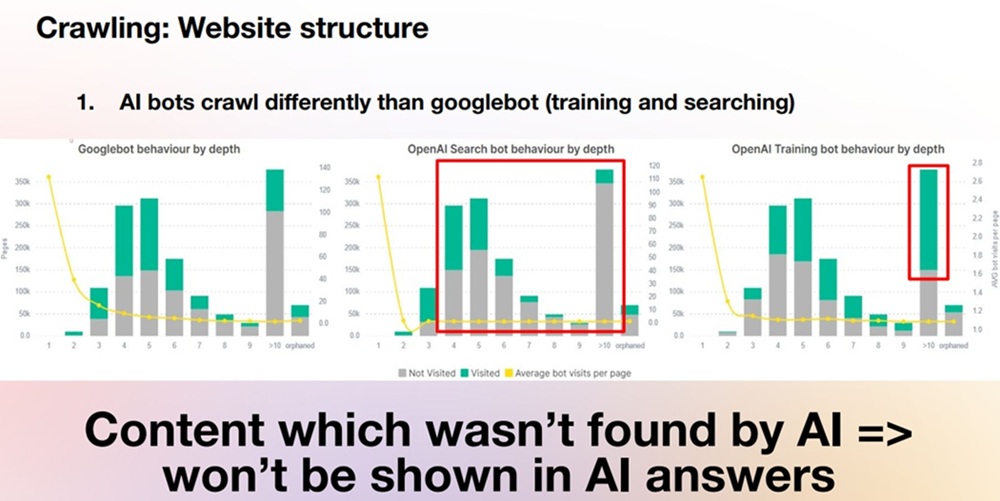

AI bots crawl differently than Googlebot, and understanding this difference has direct practical consequences.

Googlebot allocates substantial crawl budget to pages within 2 or 3 clicks of the homepage, revisiting them frequently. AI search bots behave very differently: by the time you reach pages 3 or more clicks from the homepage, average visits from AI search bots drop to roughly 1 per page. Deep content that Googlebot would eventually discover is essentially invisible to AI search crawlers.
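Click depth is easy to measure once you have a crawl of your internal links. The sketch below runs a breadth-first search from the homepage over a hypothetical link map and flags pages three or more clicks deep, the range where AI search bot visits fall to roughly one per page. The threshold is a parameter, and pages that never appear in the result are orphans that no internal link reaches at all.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Minimum number of clicks from the homepage to each reachable page (BFS)."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(depths: dict[str, int], min_depth: int = 3) -> list[str]:
    """Pages at or beyond min_depth clicks, sorted for stable output."""
    return sorted(page for page, depth in depths.items() if depth >= min_depth)


if __name__ == "__main__":
    site = {
        "/": ["/blog", "/pricing"],
        "/blog": ["/blog/page-2"],
        "/blog/page-2": ["/blog/old-post"],
    }
    print(too_deep(click_depths(site)))
```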

AI training bots behave differently again. They are less concerned with click depth and more focused on content quality – they will crawl deep pages if the content appears valuable. But training visits alone do not generate user-facing visibility.

The practical implication is straightforward: if AI bots cannot find your content, it will not appear in AI answers, will not generate referral visits, and will not produce fan-out queries you can analyze and act on. Crawl budget for AI is currently very small, and the smarter you use it, the better your results.

Two rules follow from this:

- AI bots do not click buttons. Any content that requires user interaction to load – accordions, tabs, dynamic filters – is invisible to AI bots. This is not new: Googlebot does not click buttons either. But because AI bots operate on a much smaller crawl budget, the cost of JavaScript-dependent content is higher.

- Internal links are the primary crawl mechanism. For some AI tools, internal links are the only way to discover new pages. This makes internal linking not just an SEO signal but a literal navigation requirement for AI visibility.
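To see what a bot that follows links but never clicks buttons can discover, extract `<a href>` targets from the raw HTML your server returns, without executing JavaScript. This sketch uses Python's standard `html.parser`. The markup is a made-up example in which a "Load more" button would fetch further links only after a click, so none of those links exist in the HTML.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collect (absolute URL, anchor text) pairs for every <a href> in raw HTML."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.links: list[tuple[str, str]] = []
        self._href: str | None = None
        self._text: list[str] = []

    def handle_starttag(self, tag, attrs):
        href = dict(attrs).get("href")
        if tag == "a" and href:
            self._href = urljoin(self.base_url, href)
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())  # collapse whitespace
            self.links.append((self._href, text))
            self._href = None


def extract_links(html: str, base_url: str) -> list[tuple[str, str]]:
    parser = LinkExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.links


if __name__ == "__main__":
    page = ('<nav><a href="/guides/robots-txt">Robots.txt guide</a></nav>'
            '<button onclick="loadMore()">Load more</button>')
    print(extract_links(page, "https://example.com/blog/"))
```

Only the navigation link is found; whatever `loadMore()` would add never reaches a crawler that reads HTML alone.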

JavaScript: still a blind spot

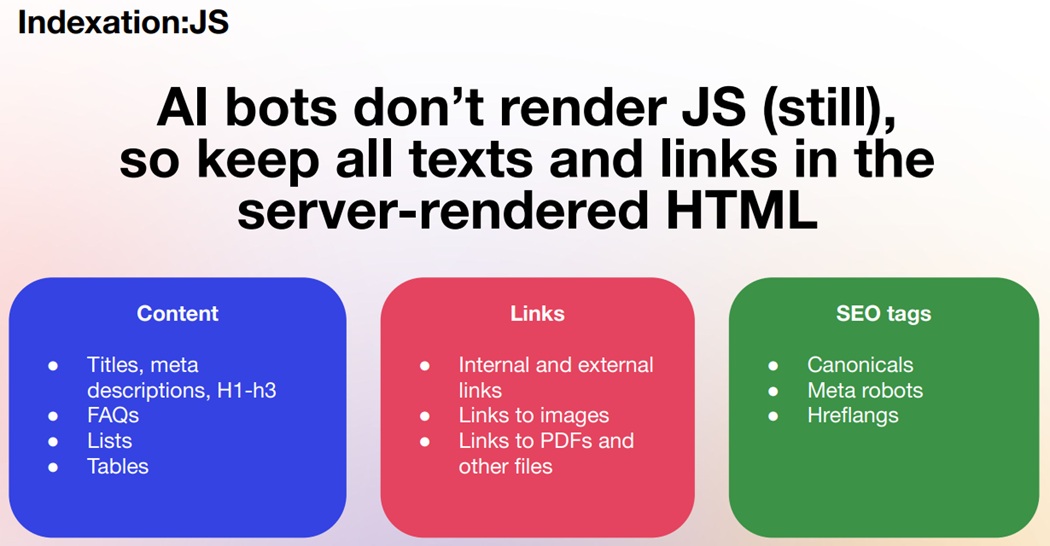

AI bots do not render JavaScript. This has been true for years and, with one partial exception, remains true in 2026.

The exception is Gemini. The latest version of Gemini has access to Google’s existing search index, which contains JavaScript-rendered content that Googlebot has already processed. Gemini can therefore access JS-rendered content indirectly, but it does not render JavaScript itself. Every other major AI platform treats JS-rendered content as if it does not exist.

The practical requirement is clear: everything that matters for AI visibility must be present in server-rendered HTML. This includes:

- Titles, meta descriptions, H1–H3 headings

- FAQs, lists and tables

- Internal and external links

- Links to images, PDFs and other files

- Canonical tags, meta robots and hreflang attributes

Content inserted via JavaScript is invisible to AI bots. So if your site relies heavily on client-side rendering, this is the most impactful technical issue to address.
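A quick way to check this is to fetch a page's HTML without a browser and test which key elements are present before any JavaScript runs. The sketch below covers a subset of the list above (title, meta description, H1 and canonical) and checks presence only, not quality. The `REQUIRED` set is an assumption you would extend, for example with hreflang on multilingual sites.

```python
from html.parser import HTMLParser

REQUIRED = {"title", "meta description", "h1", "canonical"}


class RawHtmlAudit(HTMLParser):
    """Record which key SEO elements appear in server-rendered HTML."""

    def __init__(self):
        super().__init__()
        self.found: set[str] = set()

    def handle_starttag(self, tag, attrs):
        a = {name: (value or "") for name, value in attrs}
        if tag in ("title", "h1"):
            self.found.add(tag)
        elif tag == "meta" and a.get("name", "").lower() == "description":
            self.found.add("meta description")
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.found.add("canonical")


def missing_in_raw_html(html: str) -> set[str]:
    """Elements from REQUIRED that do not appear in the given HTML."""
    audit = RawHtmlAudit()
    audit.feed(html)
    audit.close()
    return REQUIRED - audit.found
```

A client-side-rendered shell that ships only a `<title>`, an empty root `<div>` and a script tag reports the other three elements as missing, which is roughly what most AI bots see.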

JS-rendered pages also make crawling harder for Googlebot: there is always a time lag between when Googlebot crawls a page’s HTML and when it returns to render the page. This delay may last weeks, which is critical for large-scale sites.

It is also worth noting that PDF files are treated as independent content sources by AI platforms. ChatGPT and other tools read and parse PDFs well, and PDFs can be cited separately from their host pages. If your site publishes PDFs, make sure they are linked from HTML and contain well-structured, accurate content.

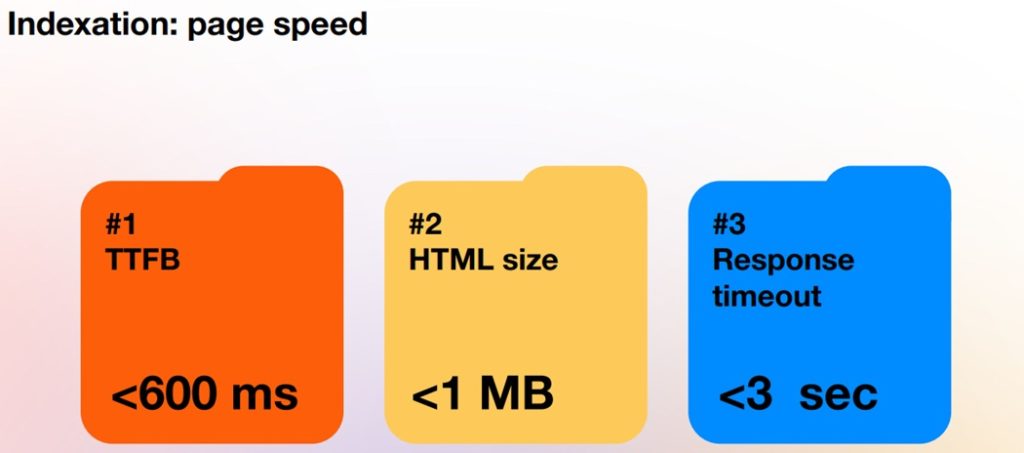

Page speed: AI bots are not as patient as Googlebot

AI bots were not built as crawlers. They were built as answer engines. As a result, they are less tolerant of slow servers than Googlebot, and page speed directly influences which pages appear in AI-generated answers.

Based on JetOctopus analysis of real server logs, three benchmarks define the threshold between pages that appear in AI answers and those that do not:

- Time to first byte (TTFB): under 600 milliseconds

- HTML file size: under 1 MB (ideally under 200 KB)

- Full server response time: under 3 seconds

The data makes the pattern clear. AI search bots crawl broadly regardless of speed – they will visit fast and slow pages alike. But AI user bots, which determine what actually appears in answers, strongly favor fast pages. Pages that load in under 600 milliseconds receive dramatically more AI user bot visits than slower pages.

In practical terms: ChatGPT crawls a lot, but it only uses the fastest pages in answers. Slow pages may be crawled but will not be selected.
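The three benchmarks above are simple to turn into an automated check. In this sketch, `measure` times a single GET with Python's standard `http.client`: connection setup is included in the TTFB figure, redirects are not followed, and the byte count is for uncompressed HTML. `benchmark_issues` then compares the result with the thresholds. Treating 1 MB as 1,000,000 bytes and 200 KB as 200,000 bytes is an assumption, and one request from your own machine is only a rough proxy for what a bot experiences.

```python
import http.client
import time
from dataclasses import dataclass
from urllib.parse import urlsplit

MAX_TTFB_MS = 600
MAX_HTML_BYTES = 1_000_000    # hard limit: 1 MB (decimal, an assumption)
IDEAL_HTML_BYTES = 200_000    # ideal target: under 200 KB
MAX_RESPONSE_MS = 3_000


@dataclass
class PageTiming:
    url: str
    ttfb_ms: float
    html_bytes: int
    total_ms: float


def measure(url: str, timeout: float = 10.0) -> PageTiming:
    """Time one GET request; http.client asks for identity (uncompressed) encoding."""
    parts = urlsplit(url)
    conn_cls = http.client.HTTPSConnection if parts.scheme == "https" else http.client.HTTPConnection
    conn = conn_cls(parts.netloc, timeout=timeout)
    path = (parts.path or "/") + (f"?{parts.query}" if parts.query else "")
    start = time.perf_counter()
    try:
        conn.request("GET", path)
        response = conn.getresponse()  # approximates TTFB: status line and headers read
        ttfb_ms = (time.perf_counter() - start) * 1000
        body = response.read()
        total_ms = (time.perf_counter() - start) * 1000
    finally:
        conn.close()
    return PageTiming(url, ttfb_ms, len(body), total_ms)


def benchmark_issues(page: PageTiming) -> list[str]:
    """List every benchmark the page misses; an empty list means it passes."""
    issues = []
    if page.ttfb_ms >= MAX_TTFB_MS:
        issues.append("TTFB >= 600 ms")
    if page.html_bytes >= MAX_HTML_BYTES:
        issues.append("HTML >= 1 MB")
    elif page.html_bytes >= IDEAL_HTML_BYTES:
        issues.append("HTML above 200 KB ideal")
    if page.total_ms >= MAX_RESPONSE_MS:
        issues.append("response >= 3 s")
    return issues
```

Usage: `benchmark_issues(measure("https://example.com/"))` for each URL in a list of key pages, ideally repeated at different times of day.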

Content optimization: what to improve

Technical accessibility gets AI bots to your pages. Content quality determines whether your pages are selected as answers.

Titles, meta descriptions and H1s. These remain critical signals. They should be clean, keyword-relevant and accurate. Use Search Console data to identify the terms your pages already rank for and ensure those terms appear in your tags. JetOctopus’s AI Page Optimizer allows you to do this at scale – identifying missing terms and updating tags across large numbers of pages directly from the interface.

Adding emojis or special symbols to titles and descriptions can increase click-through rate by approximately 8–10% according to observed data. This is a small but measurable gain worth testing.

On-page content and fan-out queries. Fan-out queries – the long, specific searches that AI tools perform on behalf of users – are visible in Google Search Console. They are typically at least 8–9 words long and reveal what users are actually trying to understand: their questions, their pain points and even comparisons with your competitors. Analyzing these queries and incorporating the topics they raise into FAQ sections and body content increases AI visibility directly, because you are answering the exact questions AI tools are researching.
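As a starting point, candidate fan-out queries can be pulled out of a Search Console performance export with a word-count filter. This is a rough heuristic built on the 8–9 word observation above, not a definitive classifier. The `query` column name and the brand terms are hypothetical, so adjust them to your export and brand.

```python
import csv
import io

# Heuristic from the webinar: fan-out queries are typically 8+ words long.
MIN_FAN_OUT_WORDS = 8


def fan_out_queries(csv_text: str, query_column: str = "query") -> list[str]:
    """Queries from a CSV export that are long enough to look like fan-out queries."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [row[query_column] for row in reader
            if len(row[query_column].split()) >= MIN_FAN_OUT_WORDS]


def branded_share(queries: list[str], brand_terms: list[str]) -> float:
    """Fraction of queries that mention any of the given brand terms."""
    if not queries:
        return 0.0
    terms = [t.lower() for t in brand_terms]
    branded = [q for q in queries if any(t in q.lower() for t in terms)]
    return len(branded) / len(queries)
```

Grouping the surviving queries by landing page shows which questions each FAQ section or body section should answer.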

Freshness matters. Content that is more than a year old is shown significantly less frequently in AI-generated answers. Freshness influences both the probability that AI selects your content and the frequency with which AI bots re-crawl your pages. Updating content is not just an SEO practice – it is an AI visibility requirement.

Internal linking: semantic cocoons. Internal linking for AI visibility goes beyond SEO fundamentals. AI tools use anchor text to decide whether to follow a link. If an anchor is descriptive and relevant to the page being browsed, there is a higher probability the AI bot will continue crawling through that link. Vague anchors like “click here” or “read more” provide no signal.

A useful framework here is the semantic cocoon strategy – grouping related pages together with descriptive internal links, and allowing cross-links to other related topic clusters. The more relevant content an AI tool encounters on your site, the broader your visibility and brand awareness across AI platforms.
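Descriptive anchors are the building blocks of such clusters, so a useful first audit is to find links whose anchor text says nothing about the target. This sketch works on (URL, anchor text) pairs from a crawl and uses a small, hypothetical list of generic phrases. Flagging empty anchor text as well is an additional assumption, not something the webinar stated.

```python
# Hypothetical list of generic anchors; extend it for your site's language.
VAGUE_ANCHORS = {"click here", "here", "read more", "learn more", "more", "this page"}


def normalize_anchor(text: str) -> str:
    """Lowercase, collapse whitespace and trim surrounding arrows or punctuation."""
    return " ".join(text.lower().split()).strip(" .:!>»")


def vague_anchor_links(links: list[tuple[str, str]]) -> list[tuple[str, str]]:
    """Return links whose anchor text gives an AI bot no topical signal."""
    flagged = []
    for url, text in links:
        anchor = normalize_anchor(text)
        if not anchor or anchor in VAGUE_ANCHORS:  # empty anchors flagged too (assumption)
            flagged.append((url, text))
    return flagged
```

Rewriting the flagged anchors to name the target topic, for example "How to reduce TTFB" instead of "Read more", gives bots a reason to follow the link.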

Analytics: measuring AI efficiency

Standard SEO metrics – rankings, clicks, CTR – do not capture AI visibility. A dedicated measurement framework is needed.

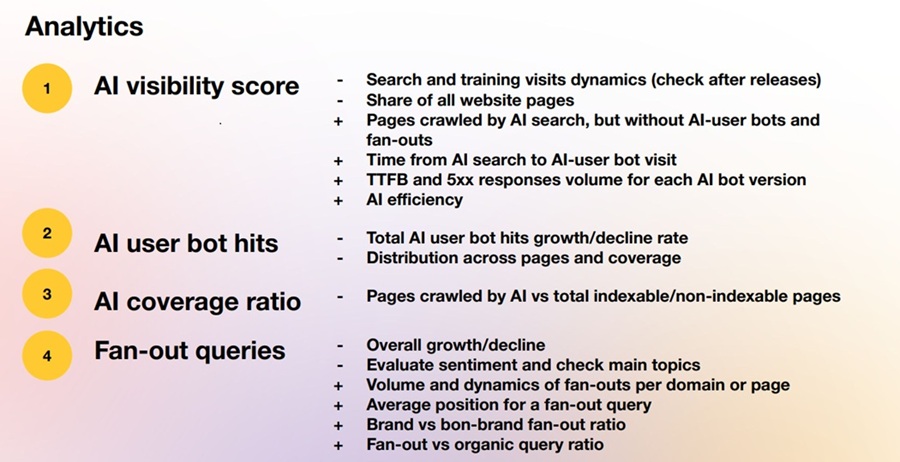

Based on the webinar, four key metrics form the foundation of AI visibility analysis:

AI visibility score. This combines server log data with fan-out queries and AI user bot activity to produce an efficiency metric for the site as a whole. It includes training and search visit dynamics, the share of pages reached by AI bots, TTFB and 5xx response rates by bot version, and overall AI efficiency.

AI user bot hits. These visits are the closest proxy for impressions within AI interfaces. Tracking their growth or decline across pages reveals which content is being selected by AI tools and which is not.

AI coverage ratio. The proportion of pages that AI bots actually reach versus the total number of indexable and non-indexable pages. A low coverage ratio indicates structural problems – pages too deep, too slow or blocked.
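The ratio itself is simple arithmetic: known pages reached by AI bots divided by all known pages. In this sketch both inputs are sets of URL paths you would assemble yourself (the page inventory from a crawl or sitemap, the reached set from logs). Intersecting them keeps URLs that exist only in bot requests, such as hallucinated 404s, out of the numerator.

```python
def ai_coverage(all_pages: set[str], ai_hit_pages: set[str]) -> tuple[float, list[str]]:
    """Return (coverage ratio, sorted list of known pages that no AI bot reached)."""
    if not all_pages:
        return 0.0, []
    reached = all_pages & ai_hit_pages  # ignore URLs that are not in the inventory
    return len(reached) / len(all_pages), sorted(all_pages - reached)
```

The unreached list is often more actionable than the ratio: cross-checking it against click depth and TTFB usually points to which of the three structural causes applies.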

Fan-out queries. The volume, sentiment and topics of fan-out queries tracked over time. Comparing fan-out query volume against organic query volume for the same pages shows whether AI visibility is growing or declining relative to traditional search. Branded fan-out queries are a particularly strong signal: they indicate that users are specifically researching your product or company through AI interfaces, which reflects genuine brand awareness growth.

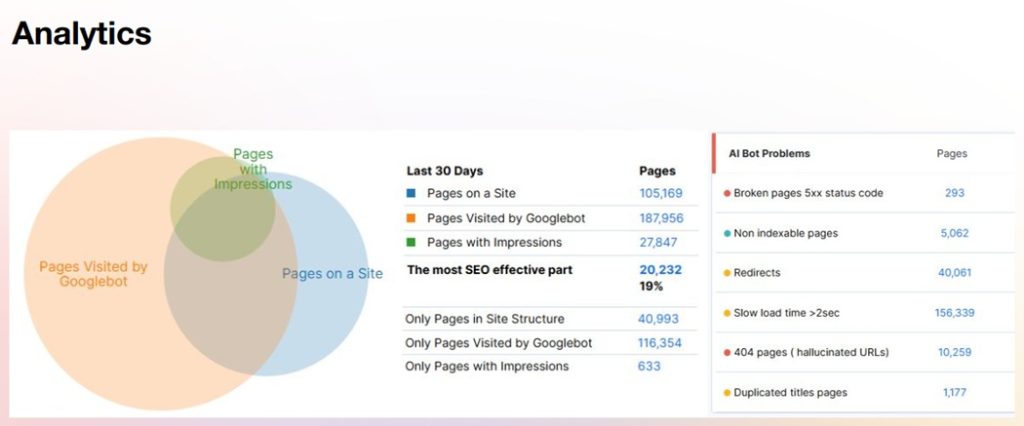

JetOctopus surfaces all of this data in a single interface: AI bot visits by platform, status codes, most visited pages, slow pages for AI, non-indexable pages crawled by AI and hallucinated URLs (404 pages that AI bots visit because they have constructed incorrect URLs). This last category is useful for identifying content gaps and redirect opportunities.
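If you want to approximate the hallucinated-URL view from raw logs, one rough approach is to keep 404 responses served to AI bots and count them by path. This sketch assumes the common Apache/Nginx combined log format and reuses user-agent tokens from the vendors' published crawler lists (an assumption to verify); it is not how JetOctopus builds its report.

```python
import re
from collections import Counter

# Combined Log Format: ip ident user [time] "METHOD path PROTO" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Assumed AI crawler tokens; check each vendor's documentation for changes.
AI_TOKENS = ("GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot",
             "Claude-SearchBot", "Claude-User", "PerplexityBot", "Perplexity-User")


def hallucinated_urls(log_lines: list[str]) -> list[tuple[str, int]]:
    """404 paths requested by AI bots, most frequent first; unparseable lines are skipped."""
    counts: Counter[str] = Counter()
    for line in log_lines:
        m = LOG_LINE.match(line)
        if not m or m["status"] != "404":
            continue
        ua = m["ua"].lower()
        if any(token.lower() in ua for token in AI_TOKENS):
            counts[m["path"]] += 1
    return counts.most_common()
```

Paths that recur in this list are candidates for a redirect to the closest real page, or for new content if the guessed URL reflects a genuine gap.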

What AI will choose

The closing question of the webinar series was direct: will AI choose you?

Based on all the evidence, AI systems select content that is:

- Fast and technically accessible

- Easy to crawl through clean HTML and descriptive internal links

- Structured so that content and SEO tags are present in server-rendered markup

- Kept fresh and factually accurate

- Organized around the actual questions users are asking

Sites that meet these criteria will be shown to millions of users through AI interfaces. Sites that do not will be crawled but not selected – present in the data, absent from the answers.

The good news is that the requirements are largely the same ones that have defined good technical SEO for years. The difference in 2026 is that the cost of ignoring them is higher, the feedback loop is faster and the opportunity – being cited by AI tools to millions of users – is larger than anything traditional search offered.