In this article, we will discuss the concept of an HTML sitemap and how to check it using JetOctopus. The primary purpose of an HTML sitemap is to provide convenient navigation for both users and search engines. It allows users to find desired pages with just a few clicks while enabling search engine bots to analyze multiple links pointing to other pages on your website. It is crucial to ensure that the HTML sitemap contains relevant and necessary data.

What is an HTML sitemap?

An HTML sitemap is a page (or multiple pages) that lists all the important links on your website. Its main function is to assist users in quickly navigating your website and finding specific sections or categories of interest. An HTML sitemap can also be used to highlight new pages, interesting categories, popular sections, or promotions, thereby improving overall website navigation.

In general, HTML sitemaps are designed to improve navigation on your website.

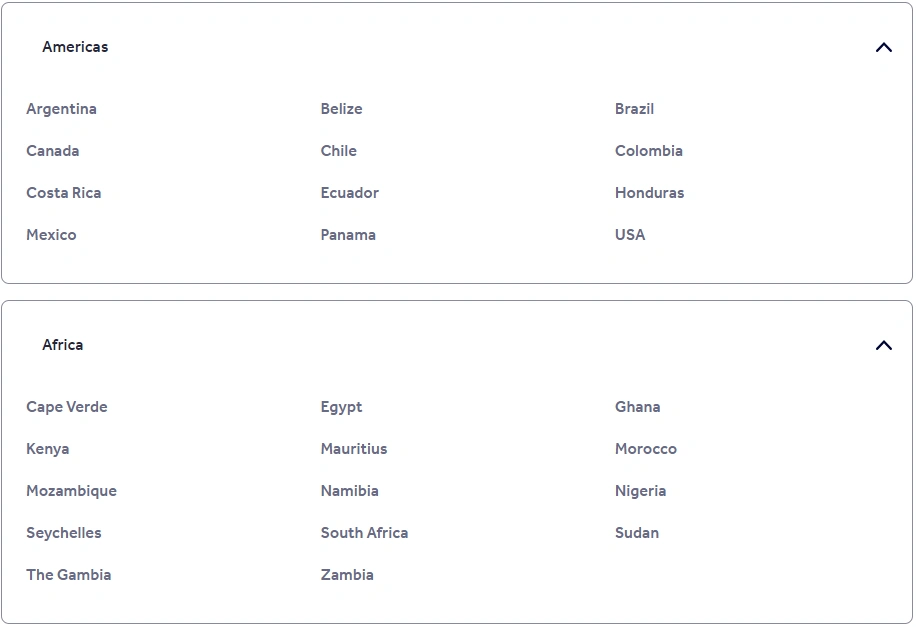

What does an HTML sitemap look like?

An HTML sitemap typically appears as a list of categories and sections of your website, accompanied by hyperlinks. All links within the HTML sitemap should be regular <a href> elements so that search engine bots can discover and follow them.
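
As a rough illustration of what "properly formatted" means here, the sketch below separates <a> tags that have a usable href from those that rely only on JavaScript. It uses Python's standard library and is not part of JetOctopus; the sample markup, the URLs, and the names `AnchorAudit` and `audit_anchors` are hypothetical.

```python
from html.parser import HTMLParser


class AnchorAudit(HTMLParser):
    """Separate <a> tags with a crawlable href from those without one."""

    def __init__(self):
        super().__init__()
        self.crawlable = []      # href values a bot can follow
        self.not_crawlable = 0   # <a> tags with a missing or script-only href

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = (dict(attrs).get("href") or "").strip()
        if href and not href.lower().startswith(("#", "javascript:")):
            self.crawlable.append(href)
        else:
            self.not_crawlable += 1


def audit_anchors(html):
    """Return (list of crawlable hrefs, number of non-crawlable anchors)."""
    parser = AnchorAudit()
    parser.feed(html)
    return parser.crawlable, parser.not_crawlable


# Hypothetical sitemap fragment: only the first link is a plain <a href>.
sample = """
<ul>
  <li><a href="/hotels/spain/">Spain</a></li>
  <li><a href="javascript:void(0)" onclick="go('/hotels/italy/')">Italy</a></li>
  <li><a onclick="go('/hotels/france/')">France</a></li>
</ul>
"""
links, broken = audit_anchors(sample)
print(links, broken)
```

Anchors counted as non-crawlable here are the ones worth rewriting as ordinary links.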

To enhance user experience, it is advisable to include highlighted sections and important pages in the sitemap. Organize the links in a user-friendly manner, incorporating alphabetical or other indexes to enable users to easily locate the desired information. For websites with a large number of links, grouping them by subject and using headings to highlight subject names can be beneficial.

When constructing an HTML sitemap, it is not necessary to include every single link from your website. Focus on including the most relevant and important pages. For example, an e-commerce website can list categories from the top level to the bottom, while a hotel booking website can provide a list of countries and cities. Prioritize what is most relevant and beneficial to your users.

However, it is essential not to overload the HTML sitemap. If your website has many pages, consider implementing pagination, an alphabetical index, or other navigation aids to ensure smooth browsing.

Pagination is especially important when the HTML sitemap contains many links. Search engines must be able to crawl the pagination links so that they can reach every paginated page and the URLs listed on it.

It is also worth noting that an HTML sitemap is optional, and not all websites have one. Whether or not you use it, provide other ways to simplify navigation for users: a well-designed, functional site search, links to important pages in the header, full breadcrumbs, and internal links to similar categories or recommended products.

What to check in an HTML sitemap?

HTML sitemaps are scanned by search engine bots and can serve as a source of internal links. Therefore, it is recommended to check your HTML sitemaps for issues that may affect the crawl budget or user experience. Run a website crawl, and you will find all the necessary information about your HTML sitemap in the crawl results.

404 and other non-200 pages

This is a very important check, because bots that scan the HTML sitemap will follow any broken links in it. Broken URLs also hurt the user experience: who will be happy to get a 404 page instead of the information they need?

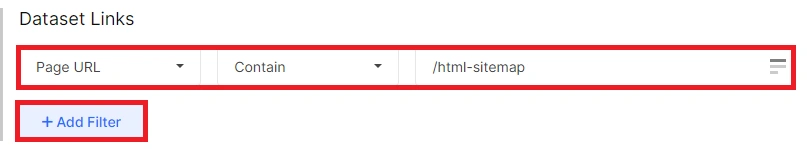

To identify such links, navigate to the “Links” data table.

Next, click on the “+Add filter” button. Select “Page URL” – “Contain” and enter the link of your HTML sitemap.

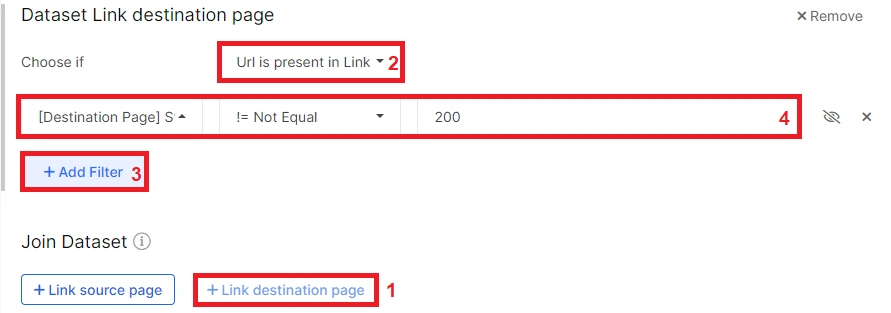

Next, in the “Join Dataset” field, add “Link destination page”. Click “+Add filter”. Then select “[Destination Page] Status Code” – “!= Not Equal” – “200”. Apply.

In the results, you will see the links found in your HTML sitemap that returned a response code other than 200.
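
For a quick spot check outside the crawler, the same idea can be sketched in a few lines of Python. This is only an assumption-laden sketch, not how JetOctopus works: `fetch_status` and `non_200_links` are hypothetical helper names, the URLs are placeholders, and `urllib` follows redirects by default, so a redirecting link reports the status of its final destination rather than 301 or 302.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if the request fails."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses are raised as exceptions
    except OSError:
        return None  # DNS failure, refused connection, timeout, and so on


def non_200_links(urls, get_status=fetch_status):
    """Map each URL whose status is not 200 to the status that was returned."""
    statuses = {url: get_status(url) for url in urls}
    return {url: code for url, code in statuses.items() if code != 200}


# Demo with a fake lookup table instead of real requests (placeholder URLs).
fake_statuses = {
    "https://example.com/hotels/spain/": 200,
    "https://example.com/old-page/": 404,
}
print(non_200_links(fake_statuses, get_status=fake_statuses.get))
```

Passing the status lookup as a parameter keeps the filtering logic testable without network access; in real use you would call `non_200_links(urls)` with the links taken from your HTML sitemap.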

Is the HTML sitemap blocked by the robots.txt file?

If the HTML sitemap is blocked by the robots.txt file, search engines will not be able to scan it and get your website’s links.

To check if your HTML sitemap is accessible to bots, go to the “Pages” data table and add the filter “Page URL” – “Contain” with the URL of your HTML sitemap. You can also add the filter “Is Robots.txt indexable” – “No” to find HTML sitemaps blocked by the robots.txt file.

Then click on “Setup columns” and select “Is Robots.txt indexable”. You will receive a separate column with information on whether the HTML sitemap can be scanned by bots. If you have several HTML sitemaps or pagination, you can check the scannability of all pages together.
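
The same question can be asked of a single URL with Python's standard `urllib.robotparser` module. The sketch below is illustrative only: the robots.txt rules and URLs are made up, `is_allowed` is a hypothetical helper, and the standard-library parser uses simple prefix matching, so its verdict may differ from a search engine's for rules that rely on wildcards.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True if robots_txt lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical rules that block everything under /sitemap/.
robots_txt = """\
User-agent: *
Disallow: /sitemap/
"""
print(is_allowed(robots_txt, "https://example.com/sitemap/hotels.html"))  # False
print(is_allowed(robots_txt, "https://example.com/hotels/spain/"))        # True
```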

Indexing

Indexing rules for HTML sitemaps can vary, and there is nothing wrong with an HTML sitemap being indexable. What matters much more is that the HTML sitemap has the “follow” directive, that is, it is not marked “nofollow”, so that bots are allowed to follow the links on it.

This can be checked by adding the columns “Is Meta Tag Follow” (or “Is X-Robots Header Follow”, if you specify indexing rules in the HTTP header) in the “Pages” datatable.
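
To make the rule concrete, here is a minimal sketch that decides whether a page is “follow” from its meta robots tag and an optional X-Robots-Tag header value. It is a simplification under stated assumptions: `MetaRobotsParser` and `is_follow` are hypothetical names, bot-specific meta tags and user-agent prefixes in the header are ignored, and a page with no directives is treated as “follow”, which is the default behavior.

```python
from html.parser import HTMLParser


def split_directives(value):
    """Turn 'NoIndex, nofollow' into {'noindex', 'nofollow'}."""
    return {part.strip().lower() for part in value.split(",") if part.strip()}


class MetaRobotsParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.directives |= split_directives(attrs.get("content") or "")


def is_follow(html, x_robots_tag=""):
    """Return False if the meta tag or the header says nofollow or none."""
    parser = MetaRobotsParser()
    parser.feed(html)
    directives = parser.directives | split_directives(x_robots_tag)
    return not ({"nofollow", "none"} & directives)


print(is_follow('<meta name="robots" content="index, follow">'))      # True
print(is_follow('<meta name="robots" content="noindex, nofollow">'))  # False
print(is_follow("<title>Sitemap</title>", x_robots_tag="nofollow"))   # False
```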

If the HTML sitemap is indexable, take care of a unique title and meta description.

Pagination

If your HTML sitemap includes pagination, verify that the links to the pagination pages are present in the HTML code and properly formatted using the <a href> tag. Additionally, confirm that the pagination pages can be scanned by bots and have the “follow” directive.

Excessive number of links

Having too many links in your HTML sitemap can affect both bot behavior and user experience. A large number of links increases the size of the DOM tree, potentially degrading page performance. Poor performance worsens the experience for users and can affect the page's ranking in Google, because Google takes page performance and loading speed into account when ranking. A large number of unstructured links is also hard for users to take in. In addition, if a page has very many outlinks, bots may process only a portion of them. Therefore, it is better to divide the HTML sitemap into smaller parts.

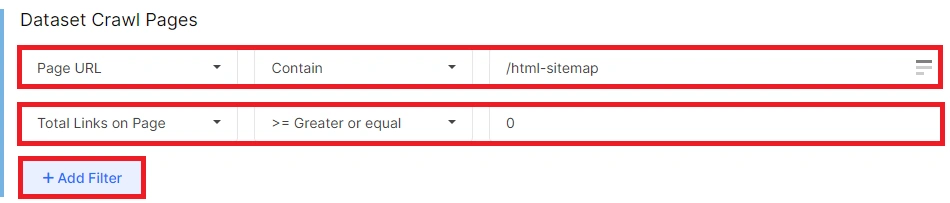

To check how many outlinks are in your HTML sitemap, filter the required HTML sitemap URLs in the “Pages” data table. Next, add the column “Total Links on Page” to see how many links there are on each page of your HTML sitemap. You can also filter by “Total Links on Page”.
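
If you have the HTML of your sitemap pages at hand, a similar count can be sketched in Python. The helper names `count_links` and `pages_over_limit`, the sample pages, and the limit are all hypothetical; the article does not prescribe a specific threshold, so choose one that suits your site.

```python
from html.parser import HTMLParser


class LinkCounter(HTMLParser):
    """Count <a> tags that carry a non-empty href attribute."""

    def __init__(self):
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "a" and dict(attrs).get("href"):
            self.count += 1


def count_links(html):
    """Return the number of <a href> links in an HTML string."""
    counter = LinkCounter()
    counter.feed(html)
    return counter.count


def pages_over_limit(pages, limit):
    """Return {url: link_count} for pages with more links than limit."""
    counts = {url: count_links(html) for url, html in pages.items()}
    return {url: n for url, n in counts.items() if n > limit}


# Hypothetical sitemap pages; the limit of 2 is only for demonstration.
pages = {
    "/sitemap/": '<a href="/a">A</a><a href="/b">B</a><a href="/c">C</a>',
    "/sitemap/page-2/": '<a href="/d">D</a>',
}
print(pages_over_limit(pages, limit=2))
```

Pages returned by `pages_over_limit` are candidates for splitting into smaller parts.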

Additional considerations for HTML sitemaps

If your HTML sitemap relies on JavaScript, ensure that the indexing and scanning rules are the same in the raw HTML and in the version rendered with JavaScript.

Give priority to links leading to indexable pages within the HTML sitemap.

Keep the HTML sitemap up to date with the latest information to provide users with accurate and relevant content.

Conclusions

An HTML sitemap is a valuable tool for improving website navigation for users and search engines. By organizing links in a user-friendly manner, highlighting important sections, and providing relevant information, an HTML sitemap can enhance the user experience and facilitate search engine crawling.

When checking an HTML sitemap, it is important to ensure that broken links are fixed, the sitemap is accessible to search engine bots, proper indexing and scanning rules are followed, pagination is correctly implemented, and the number of links is reasonable to maintain optimal performance and user experience.

Regularly updating the HTML sitemap and adhering to best practices will help ensure its effectiveness in facilitating website navigation and enhancing overall SEO.