Case study. Medical Niche. Dramatic 40% SEO decrease

A medical portal with 700K pages and 2.7M monthly visits experienced a 40% decrease in SEO traffic. We conducted a comprehensive technical audit to find the cause and developed a plan for technical optimization. Here are the most actionable insights.

How duplicates and 5xx bugs hurt crawl budget

What was done:

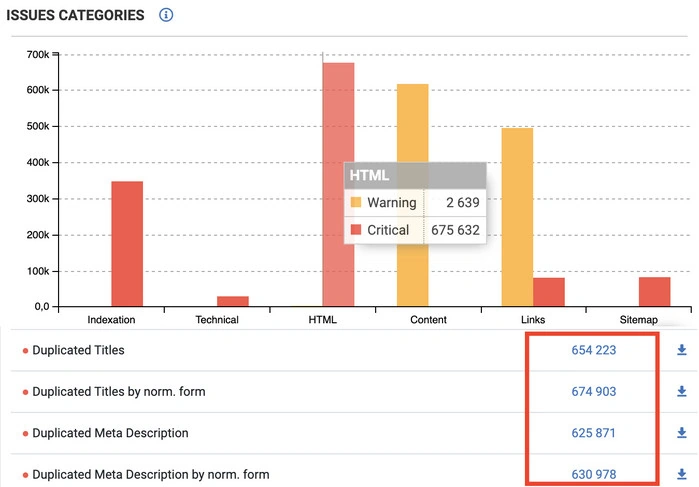

1. We conducted a comprehensive technical audit with crawl and log analysis. We overlaid the crawl and log reports to find technical bugs and to understand how bots perceived them. This revealed a large number of duplicated HTML tags:

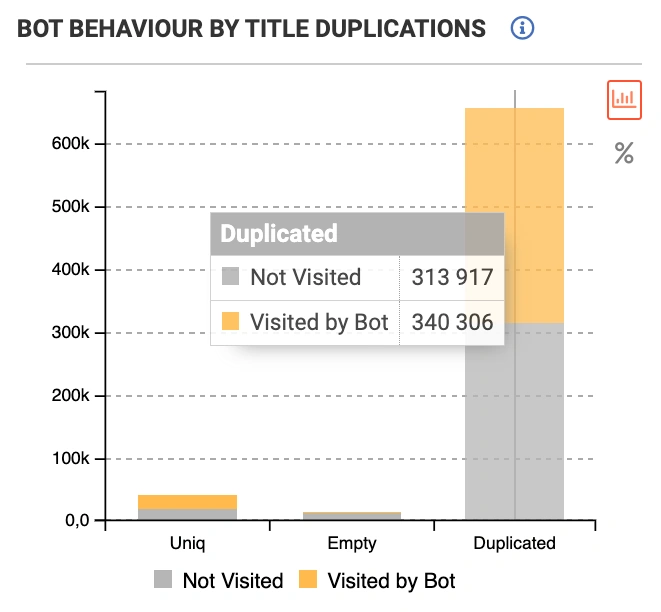

What is worse, bots visited pages with duplicate titles 15 times more often than pages with unique HTML tags:

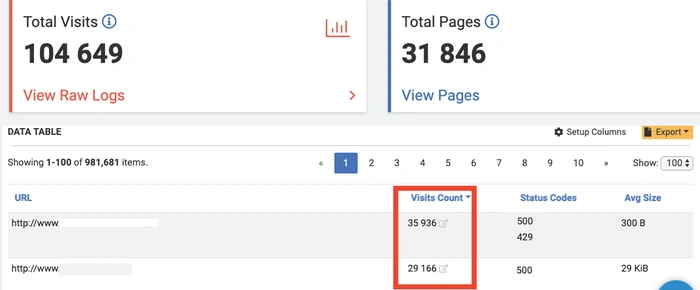
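The comparison above can be approximated by joining crawl data with log data. The following is only a minimal sketch, not the tooling used in the audit: the function name `visit_ratio`, the input shapes (a URL-to-title mapping from a crawl and a list of URLs requested by bots from the logs), and the sample data are all hypothetical.

```python
from collections import Counter

def visit_ratio(crawl, bot_hits):
    """crawl: dict mapping URL -> <title> text (hypothetical crawl export).
    bot_hits: iterable of URLs requested by search bots (hypothetical log export).
    Returns (avg bot visits per duplicate-title page,
             avg bot visits per unique-title page)."""
    # Normalize titles so trivial case/whitespace differences still count as duplicates.
    norm = {url: title.strip().lower() for url, title in crawl.items()}
    title_counts = Counter(norm.values())
    hits = Counter(bot_hits)

    dup_urls = [u for u, t in norm.items() if title_counts[t] > 1]
    uniq_urls = [u for u, t in norm.items() if title_counts[t] == 1]

    def avg(urls):
        return sum(hits[u] for u in urls) / len(urls) if urls else 0.0

    return avg(dup_urls), avg(uniq_urls)

# Made-up sample data, for illustration only.
crawl = {"/a": "Clinic", "/b": "Clinic", "/c": "Cardiology"}
bot_hits = ["/a", "/a", "/b", "/b", "/b", "/b", "/c"]
dup_avg, uniq_avg = visit_ratio(crawl, bot_hits)
print(dup_avg, uniq_avg)
```

A large gap between the two averages suggests that bots spend a disproportionate share of visits on pages with duplicated titles.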

2. We analyzed raw logs to see how bots distributed their crawl budget across the website:

30-35% (!) of the crawl budget was wasted on 2 URLs that returned 500 status codes.
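A share like this can be estimated directly from raw access logs. The sketch below assumes the common "combined" log format and identifies bots by a substring in the user agent; both are assumptions, as are the regex, the function name `crawl_budget_share`, and the sample lines. Matching on the user agent alone is a simplification, since that string can be spoofed.

```python
import re
from collections import Counter

# Assumed "combined" access log layout: request line, status, size, referrer, user agent.
LOG_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<url>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<ua>[^"]*)"$'
)

def crawl_budget_share(lines, bot_marker="Googlebot"):
    """Return {(url, status): share of all bot requests} for lines whose
    user agent contains bot_marker."""
    counts = Counter()
    for line in lines:
        m = LOG_RE.search(line.strip())
        if m and bot_marker in m.group("ua"):
            counts[(m.group("url"), int(m.group("status")))] += 1
    total = sum(counts.values())
    return {key: n / total for key, n in counts.items()} if total else {}

# Made-up sample lines, for illustration only.
BOT = '"Mozilla/5.0 (compatible; Googlebot/2.1)"'
sample = [
    f'66.249.66.1 - - [10/Oct/2020:13:55:36 +0000] "GET /search HTTP/1.1" 500 512 "-" {BOT}',
    f'66.249.66.1 - - [10/Oct/2020:13:55:37 +0000] "GET /a HTTP/1.1" 200 2048 "-" {BOT}',
    f'66.249.66.1 - - [10/Oct/2020:13:55:38 +0000] "GET /a HTTP/1.1" 200 2048 "-" {BOT}',
    f'66.249.66.1 - - [10/Oct/2020:13:55:39 +0000] "GET /a HTTP/1.1" 200 2048 "-" {BOT}',
    '10.0.0.5 - - [10/Oct/2020:13:55:40 +0000] "GET /a HTTP/1.1" 200 2048 "-" "Mozilla/5.0"',
]
shares = crawl_budget_share(sample)
# Share of bot requests that ended in a 5xx response.
wasted = sum(share for (url, status), share in shares.items() if status >= 500)
print(shares, wasted)
```

Sorting `shares` by value makes it easy to spot a handful of URLs that absorb an outsized part of bot activity.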

Results:

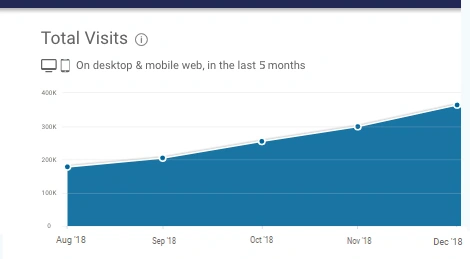

After the content team replaced the duplicated HTML tags with unique titles and the webmasters fixed the 500 response bugs, organic traffic increased significantly.


The medical portal's SEO specialist on JetOctopus:

At present, JetOctopus is the unique crawler that could scan all webpages really fast. Usability of reports, it’s really easy to work with crawling results. Reports and datatables are formed really fast, a variety of useful options. I will definitely recommend JetOctopus crawler to my SEO friends. I give JetOctopus 9 out of 10 because I’m sure you can make your tool even better, but now your crawler is cool.