Page load time affects how often search engines crawl your website: the slower your pages, the less often search robots visit them. In other words, pages that respond slowly to search robots hurt your website's crawl budget. We therefore recommend finding the slowest pages and optimizing their performance.

Because this issue is so important, we have collected the slowest pages into a separate report in the “Logs” menu. This report is available only if you have connected your server logs.

Step 1. Analyze the dynamics of load time.

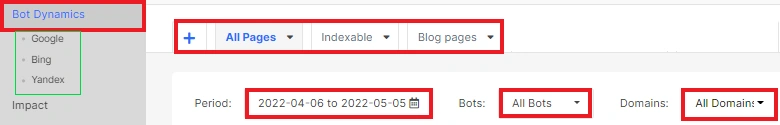

To do this, go to the “Logs” menu and select the “Bot dynamics” report.

Next, select the desired time period and the search engine you want to analyze. You can also choose a domain or a segment of pages.

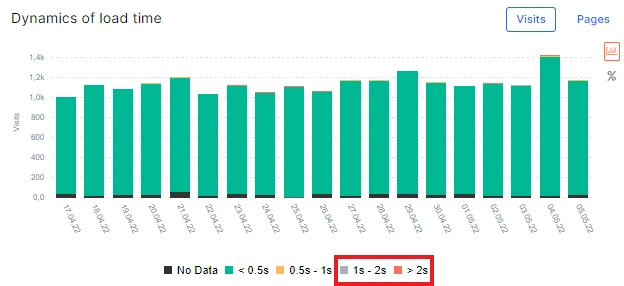

The “Dynamics of load time” chart shows how page load time changed over the selected period. The slowest pages are marked in red and gray.

You can choose whether to view statistics by visits or by pages. Clicking any part of the chart opens the corresponding data table.

Note that this chart shows server response time, not rendering speed: the time that passed between the search robot's request and the moment the server returned the requested page.

Sudden spikes in slow-loading pages may indicate problems with the server or with your web pages during that period.
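If you export the data, you could look for such spikes yourself by comparing each day's share of slow bot visits with a typical day. The Python sketch below is only an illustration, not how the chart is built. It assumes hypothetical exported rows of (visit date, URL, response time in seconds), treats responses over 1 second as slow, and flags days whose slow share is more than twice the median daily share. Both thresholds are arbitrary assumptions that you would tune for your own site.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical export row: (visit date, URL, server response time in seconds).
Visit = tuple[date, str, float]


def slow_share_by_day(visits: list[Visit], threshold_s: float = 1.0) -> dict[date, float]:
    """Return each day's share of bot visits slower than threshold_s, ordered by day."""
    totals: dict[date, int] = defaultdict(int)
    slow: dict[date, int] = defaultdict(int)
    for day, _url, seconds in visits:
        totals[day] += 1
        if seconds > threshold_s:
            slow[day] += 1
    return {day: slow[day] / totals[day] for day in sorted(totals)}


def flag_spikes(shares: dict[date, float], factor: float = 2.0) -> list[date]:
    """Flag days whose slow share exceeds `factor` times the median daily share.

    The factor of 2 is an arbitrary assumption. If the median share is 0,
    any day with at least one slow visit is flagged.
    """
    if not shares:
        return []
    baseline = median(shares.values())
    return [day for day, share in shares.items() if share > factor * baseline]
```

Using the median as the baseline keeps a single bad day from inflating the "normal" level it is compared against.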

Step 2. Analyze the statistics of slow and extra-slow pages.

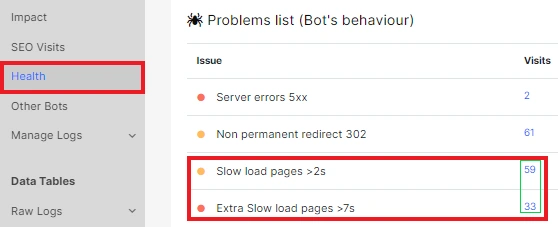

Go to the “Health” report in the “Logs” menu to see the total number of slow and extra-slow pages. If, during the selected period, search engines visited pages with a load time of 1 to 2 seconds (slow pages) or more than 2 seconds (extra-slow pages), their numbers appear in the “Problems list (Bot’s behavior)”.
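The two ranges can be illustrated with a short Python sketch that counts distinct pages per range from exported bot visits. This is not the report's implementation: the (URL, response time in seconds) row layout is hypothetical, and treating exactly 1 second as fast and exactly 2 seconds as slow is our assumption about the boundaries.

```python
from collections import defaultdict
from typing import Optional

# Assumed boundaries: "slow" is over 1 s and up to 2 s, "extra-slow" is over 2 s.
SLOW_S = 1.0
EXTRA_SLOW_S = 2.0


def classify(seconds: float) -> Optional[str]:
    """Map one response time to a range name, or None if it is fast enough."""
    if seconds > EXTRA_SLOW_S:
        return "extra-slow"
    if seconds > SLOW_S:
        return "slow"
    return None


def count_problem_pages(visits: list[tuple[str, float]]) -> dict[str, int]:
    """Count distinct URLs per range from hypothetical (URL, seconds) rows."""
    pages: dict[str, set[str]] = defaultdict(set)
    for url, seconds in visits:
        bucket = classify(seconds)
        if bucket is not None:
            pages[bucket].add(url)
    return {bucket: len(urls) for bucket, urls in pages.items()}
```

Because pages are counted per visit, a page that responded in 1.5 seconds on one visit and 2.2 seconds on another is counted in both ranges.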

Click the number next to a problem to open the corresponding data table.

Step 3. Check the slowest pages of your website visited by search robots.

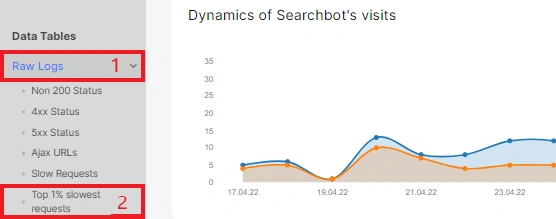

Go to the data tables and select “Raw logs”, then choose the “Top 1% slowest requests” report from the drop-down menu. It lists the 1% of pages with the slowest load times during bot visits.

Note that this report does not filter pages by a fixed load-time threshold; it shows the slowest ones relative to the rest. So even if all your pages are fast enough, you will still find the slowest of them here.
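The difference between a relative cut-off and a fixed threshold can be seen in a minimal Python sketch. It assumes hypothetical exported rows of (URL, response time in seconds) and rounds the 1% cut-off up so that at least one row is always returned; the report's own rounding may differ.

```python
import math


def top_slowest(visits: list[tuple[str, float]], percent: int = 1) -> list[tuple[str, float]]:
    """Return the slowest `percent` of (URL, seconds) rows, slowest first.

    Rounding up, so at least one row is returned, is an assumption; the
    report's own cut-off may differ.
    """
    if not visits:
        return []
    n = max(1, math.ceil(len(visits) * percent / 100))
    return sorted(visits, key=lambda row: row[1], reverse=True)[:n]
```

For example, `top_slowest([("/a", 0.1), ("/b", 0.2)])` still returns `[("/b", 0.2)]`, even though both responses are well under a second.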

Step 4. Analyze pages with load time greater than 1 second.

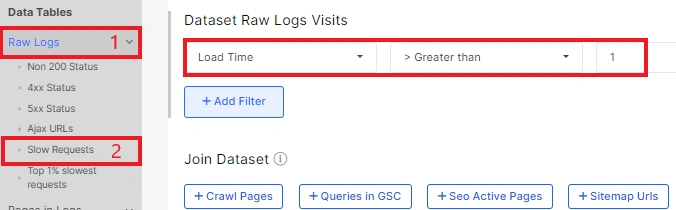

A load time of more than 1 second is quite slow for search engines, which always try to conserve resources, so this group of pages is worth analyzing. This data table is available in “Raw logs”.

Here you can see all slow-loading visits, as well as a chart showing how their number changed over time.

A search bot may visit the same page several times. If the page took more than 1 second to respond on each visit, every one of those visits appears as a separate row in this data table.
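To illustrate why one page can produce several rows, here is a minimal Python sketch that keeps every slow visit and then counts slow visits per day, the kind of series a dynamics chart plots. The (visit date, URL, response time in seconds) row layout is a hypothetical export format, not the table's actual columns.

```python
from collections import Counter
from datetime import date

# Hypothetical export row: (visit date, URL, server response time in seconds).
Visit = tuple[date, str, float]


def slow_visits(visits: list[Visit], threshold_s: float = 1.0) -> list[Visit]:
    """Keep every visit slower than threshold_s; repeat visits stay separate rows."""
    return [row for row in visits if row[2] > threshold_s]


def slow_visits_per_day(visits: list[Visit], threshold_s: float = 1.0) -> dict[date, int]:
    """Count slow visits per day, ordered by date."""
    counts = Counter(day for day, _url, _seconds in slow_visits(visits, threshold_s))
    return dict(sorted(counts.items()))
```

Nothing is deduplicated by URL here, so a page that was slow on three visits contributes three rows.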

Step 5. Analyze pages that are always slow to load.

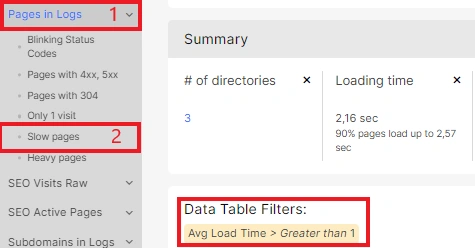

The same page can load at different speeds under different conditions. However, some pages are consistently slow or critically slow. You can find them in the “Slow Pages” report of the “Pages” data table, which lists pages with an average load time of more than 1 second.

If you noticed such a problem in the previous steps, you can also analyze pages with an average load time of 2 seconds or more.

These pages will likely keep loading slowly until the underlying cause is found and fixed.
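Averaging each page's load time across all bot visits can be sketched in Python as follows. This is an illustration under assumptions, not the report's implementation: it takes hypothetical exported rows of (URL, response time in seconds) and uses a strict "greater than" comparison. To include pages at exactly 2 seconds, as in "2 seconds or more", you would change `>` to `>=`.

```python
from collections import defaultdict
from statistics import mean


def slow_pages(visits: list[tuple[str, float]], threshold_s: float = 1.0) -> dict[str, float]:
    """Return {URL: average seconds} for pages whose mean response time exceeds threshold_s."""
    times: dict[str, list[float]] = defaultdict(list)
    for url, seconds in visits:
        times[url].append(seconds)
    averages = {url: mean(values) for url, values in times.items()}
    # Slowest pages first, so the worst offenders are at the top.
    ranked = sorted(averages.items(), key=lambda item: item[1], reverse=True)
    return {url: avg for url, avg in ranked if avg > threshold_s}
```

Averaging smooths out one-off slow responses, so a page only appears here if it is slow across its visits as a whole.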

You can export all data in whichever format is convenient for you.