What You Should Know About the rel=next and rel=prev Google Update

Recently, Google Webmaster Central announced a series of SEO updates on Twitter under the hashtag #springiscoming. The news about how Google now treats pagination caused quite a stir in the SEO community. Let's let the dust settle and get to the bottom of it.

Winter Spring is Coming!

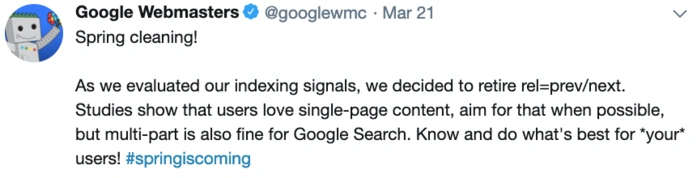

On March 21, 2019, @googlewmc posted the following tweet:

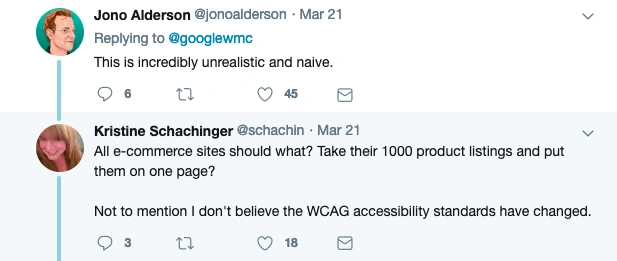

The vague wording led to misunderstandings:

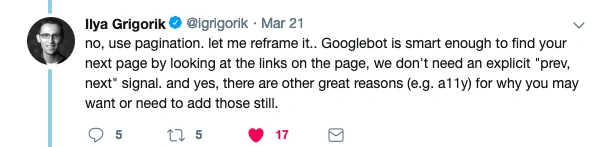

Google web engineer Ilya Grigorik clarified:

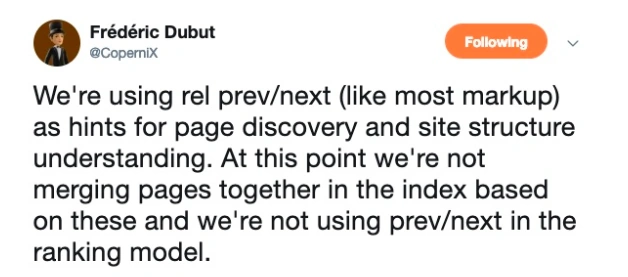

In other words, you don’t need to delete the rel=prev/next links you implemented over the years. Note that these link attributes are part of a web standard and that there are other search engine bots besides Googlebot. For instance, Bing’s webmaster Frédéric Dubut mentioned that Bing uses rel=prev/next as a hint for crawling pages and understanding website structure, but not for grouping paginated pages or ranking them.
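For readers who have not looked at this markup in a while, here is a minimal sketch of what the rel=prev/next link tags look like for a paginated series. The `pagination_link_tags` helper and the `?page=N` URL scheme are assumptions made for illustration only; your site may build its pagination URLs differently.

```python
from html import escape


def pagination_link_tags(base_url: str, page: int, last_page: int) -> list[str]:
    """Return the <link rel="prev"/"next"> tags for one page of a series.

    Assumption (hypothetical URL scheme): page 1 lives at base_url,
    and page N lives at base_url?page=N.
    """
    if not 1 <= page <= last_page:
        raise ValueError("page must be between 1 and last_page")

    def url_for(n: int) -> str:
        return base_url if n == 1 else f"{base_url}?page={n}"

    tags = []
    if page > 1:  # the first page has no predecessor
        tags.append(f'<link rel="prev" href="{escape(url_for(page - 1))}">')
    if page < last_page:  # the last page has no successor
        tags.append(f'<link rel="next" href="{escape(url_for(page + 1))}">')
    return tags


if __name__ == "__main__":
    # Print the tags that page 2 of a 3-page series would carry in its <head>.
    for tag in pagination_link_tags("https://example.com/blog", 2, 3):
        print(tag)
```

The middle page of a series carries both tags, while the first and last pages carry only one each.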

Since these link attributes are part of the W3C standard and not a Google invention, it’s best to keep everything as it is. Still, this is a good time to analyze your site structure comprehensively, as Google’s understanding of website hierarchy keeps improving.

Are there any changes in JetOctopus algorithms?

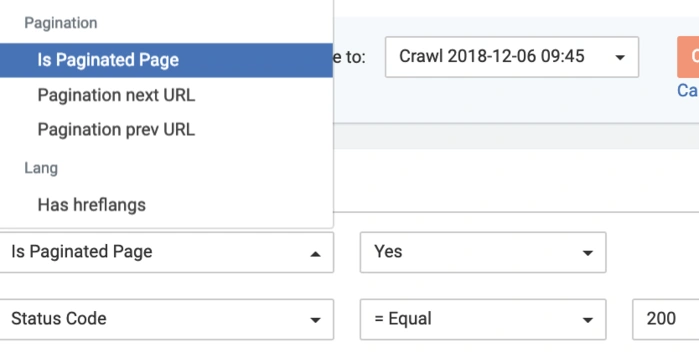

The JO crawler discovers paginated pages with the help of rel=prev/next link attributes.
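To give a rough idea of how a crawler can pick up these hints, here is a small sketch that extracts rel=prev/next URLs from a page using only the Python standard library. It is an illustration under our own assumptions, not JetOctopus's actual implementation; the names `PaginationLinkParser` and `find_pagination_links` are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class PaginationLinkParser(HTMLParser):
    """Collects the first rel=prev and rel=next URL found in a document."""

    def __init__(self, page_url: str):
        super().__init__()
        self.page_url = page_url
        self.links = {}  # maps "prev"/"next" to an absolute URL

    def handle_starttag(self, tag, attrs):
        # rel=prev/next may appear on <link> in <head> or on <a> in the body.
        if tag not in ("link", "a"):
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href:
            return
        # rel is a space-separated token list, e.g. rel="next nofollow".
        rel_tokens = (attr.get("rel") or "").lower().split()
        for rel in ("prev", "next"):
            if rel in rel_tokens and rel not in self.links:
                # Resolve relative hrefs against the URL of the crawled page.
                self.links[rel] = urljoin(self.page_url, href)


def find_pagination_links(html: str, page_url: str) -> dict:
    parser = PaginationLinkParser(page_url)
    parser.feed(html)
    return parser.links
```

A crawler could add the returned `next` URL to its queue and repeat the process until a page no longer declares one.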

For now, the JO tech team plans to keep everything working the way it does at the moment. Pagination rel=prev/next signals are still useful for other search engines. And if paginated pages are just ‘normal pages’ now, it becomes even more important not to noindex them.
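If you want to spot-check your own paginated pages for an accidental noindex, a sketch along these lines may help. It only inspects robots meta tags in the HTML (it does not look at the `X-Robots-Tag` HTTP header), and the names `RobotsMetaParser` and `is_noindexed` are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() not in ("robots", "googlebot"):
            return
        content = attr.get("content") or ""
        # Directives are comma-separated, e.g. content="noindex, follow".
        for directive in content.split(","):
            directive = directive.strip().lower()
            if directive:
                self.directives.add(directive)


def is_noindexed(html: str) -> bool:
    parser = RobotsMetaParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in parser.directives or "none" in parser.directives
```

Running this over pages 2, 3, and onward of a series is a quick way to confirm they remain indexable.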
