Looking at websites that get a lot of traffic, some might think this is due to their technical perfection. Others are convinced that the older such websites are, the more traffic they get thanks to age and trust. Let’s check who is right, using one of the fastest-developing fields in e-commerce: the fashion industry.

To do that, we chose the leading British web-stores of 2017 that get the most traffic and crawled 1 mln pages of each.

- H & M

- Asos

- Uniqlo

- Nordstrom

- Gamiss

Settings:

- limited to 1 mln pages, unlimited depth

- following the instructions of robots.txt and meta=robots

- the main subdomain only (www version included)

- sitemap not processed
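
Two of the settings above, obeying robots.txt and staying on the main subdomain, can be approximated with the Python standard library. This is only a sketch and not the code of our crawler: the `should_crawl` helper, the sample robots.txt rules and the `example.com` URLs are hypothetical, and the meta robots check and the 1 mln page cap are left out.

```python
from urllib.parse import urlparse
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would download it from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without making a network request."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def strip_www(host: str) -> str:
    """Treat 'example.com' and 'www.example.com' as the same host."""
    host = host.lower()
    return host[4:] if host.startswith("www.") else host


def should_crawl(url: str, parser: RobotFileParser, main_host: str,
                 user_agent: str = "MyCrawler") -> bool:
    """Allow a URL only if it is on the main host and robots.txt permits it."""
    host = urlparse(url).hostname or ""
    if strip_www(host) != strip_www(main_host):
        return False
    return parser.can_fetch(user_agent, url)


robots = build_parser(ROBOTS_TXT)
for candidate in (
    "https://www.example.com/dresses/",
    "https://www.example.com/cart/123",
    "https://blog.example.com/dresses/",
):
    print(candidate, should_crawl(candidate, robots, "example.com"))
```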

Site sizes

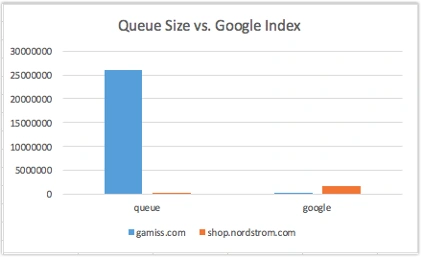

SEO experts often talk about ‘big sites’ and ‘small sites’, judging by the number of pages indexed by Google, and take this information into consideration when optimizing a site’s structure, interlinking, etc. But having analyzed the pages with our crawler, we see quite a different picture. For instance, after crawling 1 mln pages of gamiss.com we found 26 mln pages in the queue, while at shop.nordstrom.com the queue held only 271 thousand. Yet gamiss.com had only around 350 thousand pages in the Google index, while shop.nordstrom.com had 1.7 mln.

Thus you can’t figure out the real size of a site from Google index data alone. It often happens, especially with web-stores, that Google indexes only one-tenth of the pages found.
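
To make the comparison concrete, the figures quoted above can be turned into a rough "index coverage" ratio. The sketch below rests on an assumption that is ours and not stated by the crawl data: that the number of discovered pages equals crawled pages plus pages still in the queue. The `index_coverage` name is hypothetical.

```python
def index_coverage(indexed: int, crawled: int, queued: int) -> float:
    """Share of discovered URLs that appear in the Google index.

    Assumption: discovered = crawled + queued, with no overlap between them.
    """
    discovered = crawled + queued
    if discovered == 0:
        raise ValueError("no discovered pages")
    return indexed / discovered


# Rounded figures taken from the text above.
gamiss = index_coverage(indexed=350_000, crawled=1_000_000, queued=26_000_000)
nordstrom = index_coverage(indexed=1_700_000, crawled=1_000_000, queued=271_000)

print(f"gamiss.com: {gamiss:.1%}")
print(f"shop.nordstrom.com: {nordstrom:.1%}")
```

A ratio above 1 simply means the index holds more URLs than the crawl discovered under these settings.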

Technical condition

It is obvious that the more convenient a web-store is, the higher its conversion rate. Online clothing shops have an extra problem: an enormous number of images. As a result, a page with all its resources may weigh 1, 2 or even more MB.

Our study showed that every site had 5xx and 4xx pages. The absolute “leaders” were uniqlo.com with 92 thousand 5xx errors and shop.nordstrom.com with 94 thousand 4xx errors. On the rest of the sites, problem pages made up less than 1% of the total.

As for load time, the slowest were urbanoutfitters.com, where 93% of pages took more than 2 seconds to load, and hm.com with 13%. The other sites are rather well optimized for speed.
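
Shares like these can be computed from raw crawl records in a few lines. The sketch below assumes each record is a `(status_code, load_seconds)` tuple, which is a hypothetical format rather than our crawler's export, and uses the 2-second threshold mentioned above. The sample data is invented for illustration.

```python
from collections import Counter

SLOW_THRESHOLD_SECONDS = 2.0  # threshold used in the text


def summarize(pages):
    """Return the share of 4xx, 5xx and slow pages.

    pages: iterable of (status_code, load_seconds) tuples (assumed format).
    """
    counts = Counter()
    total = 0
    for status, seconds in pages:
        total += 1
        if 400 <= status < 500:
            counts["4xx"] += 1
        elif 500 <= status < 600:
            counts["5xx"] += 1
        if seconds > SLOW_THRESHOLD_SECONDS:
            counts["slow"] += 1
    if total == 0:
        return {"4xx": 0.0, "5xx": 0.0, "slow": 0.0}
    return {key: counts[key] / total for key in ("4xx", "5xx", "slow")}


# Invented sample: four pages, one 404, one 503, two slower than 2 seconds.
sample = [(200, 0.8), (200, 2.4), (404, 0.3), (503, 5.1)]
report = summarize(sample)
print(report)
```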

Content

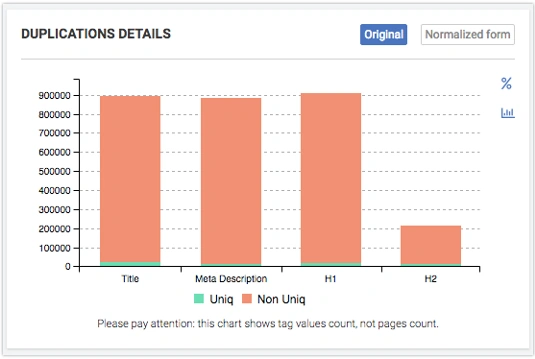

One of the most crucial content problems is duplicate pages, that is, pages that are entirely identical. In over a year of working with various web-stores we seldom faced such a problem. But in the context of our research it is rather acute:

hm.com – 6.21%

www.asos.com – 7.15%

uniqlo.com – 2.27%

shop.nordstrom.com – 1.91%

The other sites have less than 0.1%.
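
One common way to find fully identical pages is to hash each page's normalized text and group URLs by hash. The sketch below illustrates that idea and is not a description of how our crawler does it. The whitespace and case normalization, the function names and the sample URLs are all assumptions, and every page in a duplicate group is counted as duplicated.

```python
import hashlib
import re
from collections import defaultdict


def content_fingerprint(text: str) -> str:
    """Hash of the text after collapsing whitespace and lowercasing."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def duplicate_share(pages: dict) -> float:
    """Share of pages whose content is identical to at least one other page.

    pages: mapping of URL -> extracted page text (assumed format).
    """
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[content_fingerprint(text)].append(url)
    duplicated = sum(len(urls) for urls in groups.values() if len(urls) > 1)
    return duplicated / len(pages) if pages else 0.0


# Invented sample: the two filter URLs serve the same text.
sample_pages = {
    "/dresses?sort=price": "Red dress  Blue dress",
    "/dresses?sort=name": "red dress blue dress",
    "/shoes": "Sneakers Boots",
    "/bags": "Totes Clutches",
}
print(duplicate_share(sample_pages))
```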

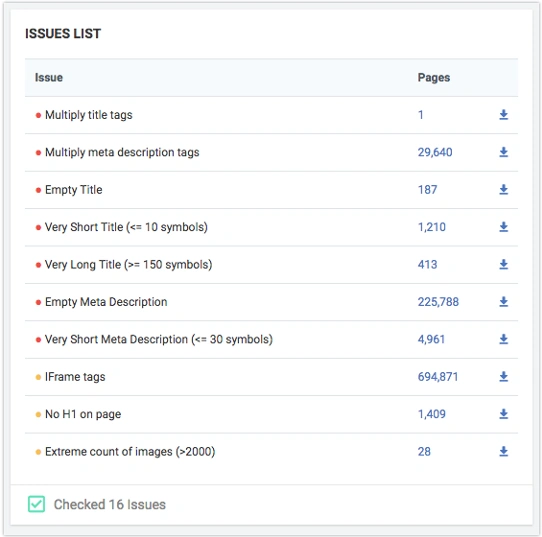

Typical content problems

There’s another important problem: thin pages (with too little content) or broken pages, where the robot finds fragments of ajax pages without the html->body layout. We always single out pages containing fewer than 100 words; 8 times out of 10 such pages have issues. Here are the results:

shop.nordstrom.com – 2.19%

hm.com – 1.68%

uniqlo.com – 1.11%

The other sites have no such pages.
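
The 100-word check described above can be sketched with Python's built-in HTML parser. This is a simplified illustration under our own assumptions: the `BodyTextExtractor` class and `classify` function are hypothetical names, a page with no `<body>` tag at all is treated as "broken", and text inside `<script>` and `<style>` is not counted as words.

```python
from html.parser import HTMLParser


class BodyTextExtractor(HTMLParser):
    """Counts visible words inside <body> and records whether <body> exists."""

    def __init__(self):
        super().__init__()
        self.has_body = False
        self.words = 0
        self._in_body = False
        self._skip_depth = 0  # depth inside <script>/<style>

    def handle_starttag(self, tag, attrs):
        if tag == "body":
            self.has_body = True
            self._in_body = True
        elif tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag == "body":
            self._in_body = False
        elif tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._in_body and not self._skip_depth:
            self.words += len(data.split())


def classify(html: str, min_words: int = 100) -> str:
    """Return 'broken' (no <body>), 'thin' (< min_words) or 'ok'."""
    parser = BodyTextExtractor()
    parser.feed(html)
    parser.close()
    if not parser.has_body:
        return "broken"
    return "thin" if parser.words < min_words else "ok"


print(classify("<div>ajax fragment</div>"))
print(classify("<html><body><p>short page</p></body></html>"))
```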

Almost none of these web-stores make the titles of filter pages unique; the share of duplicate titles ranges from 60 to 90%. The only exceptions are shop.nordstrom.com with 13% and hm.com with 33% of duplicates.
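
A duplicate-title share like the one above can be estimated by counting how many pages share their title with at least one other page. The sketch below assumes titles are compared after trimming and lowercasing. That normalization, the function name and the sample titles are our assumptions for illustration.

```python
from collections import Counter


def duplicate_title_share(titles) -> float:
    """Share of pages whose title is used by at least one other page."""
    normalized = [title.strip().lower() for title in titles]
    counts = Counter(normalized)
    duplicates = sum(1 for title in normalized if counts[title] > 1)
    return duplicates / len(normalized) if normalized else 0.0


# Invented sample: three filter pages reuse the category title.
sample_titles = [
    "Dresses | Shop",
    "Dresses | Shop",
    "dresses | shop ",
    "Red Dresses | Shop",
]
print(duplicate_title_share(sample_titles))
```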

Hreflang

Managing language versions is perhaps one of the most difficult aspects of technical SEO, and of software support as well. In his article, Glenn Gabe describes one of the problems that might arise with hreflang setup.

In our case, the majority of the web-stores using hreflang have the classic problems: the landing page is a redirect page or is even closed for indexing. At hm.com almost 81% of hreflang pages are closed for indexing; at uniqlo.com 84% of pages have links to redirect or broken pages.
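
The classic hreflang problems named above (a target that redirects, is broken, or is closed for indexing) can be checked mechanically once a crawl has recorded each page's status and indexability. The sketch below assumes a hypothetical in-memory model: the `Page` dataclass, its fields and the sample URLs are ours, not a real crawler export.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    """Hypothetical crawl record for one URL."""
    status: int = 200
    indexable: bool = True
    hreflang: dict = field(default_factory=dict)  # language code -> target URL


def hreflang_issues(pages: dict) -> list:
    """Return (source_url, lang, target_url, reason) for each bad hreflang link."""
    issues = []
    for url, page in pages.items():
        for lang, target in page.hreflang.items():
            target_page = pages.get(target)
            if target_page is None:
                issues.append((url, lang, target, "not crawled"))
            elif 300 <= target_page.status < 400:
                issues.append((url, lang, target, "redirect"))
            elif target_page.status >= 400:
                issues.append((url, lang, target, "broken"))
            elif not target_page.indexable:
                issues.append((url, lang, target, "closed for indexing"))
    return issues


# Invented sample: one page with three problematic language alternates.
sample_site = {
    "https://example.com/en/": Page(hreflang={
        "de": "https://example.com/de/",
        "fr": "https://example.com/fr/",
        "es": "https://example.com/es/",
    }),
    "https://example.com/de/": Page(status=301),
    "https://example.com/fr/": Page(indexable=False),
}
for issue in hreflang_issues(sample_site):
    print(issue)
```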

Indexing management

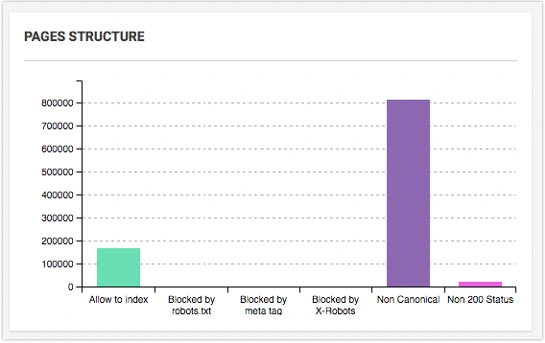

The main feature of any web-store is a large number of non-canonical pages, usually up to 70-90%.

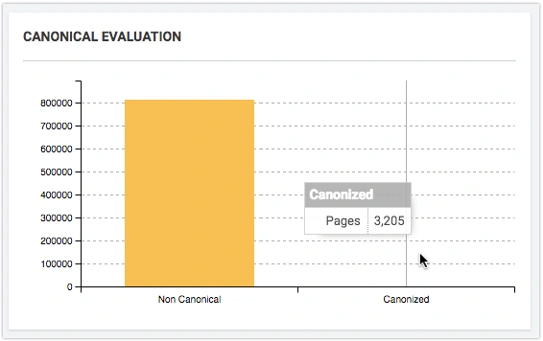

This happens because filters are built from product characteristics. For example, at gamiss.com 810 thousand non-canonical pages point to only 3 thousand canonical pages (0.4%).

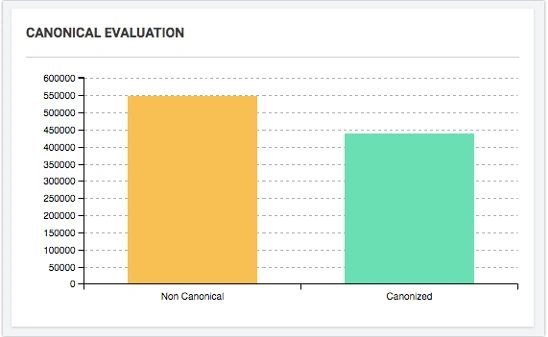

The situation is the reverse at trendyol.com: 547 thousand non-canonical pages point to 439 thousand canonical pages (80.2%).
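
The ratio in these two examples is the number of distinct canonical targets divided by the number of non-canonical pages pointing at them. A minimal sketch of that calculation follows. It assumes crawl records are `(url, canonical_url)` pairs, which is a hypothetical format, and the sample URLs are invented.

```python
def canonical_stats(records):
    """Count non-canonical pages and the distinct URLs they point to.

    records: iterable of (url, canonical_url) pairs; canonical_url may be None.
    Returns (non_canonical_count, distinct_target_count, ratio).
    """
    non_canonical = 0
    targets = set()
    for url, canonical in records:
        if canonical and canonical != url:
            non_canonical += 1
            targets.add(canonical)
    ratio = len(targets) / non_canonical if non_canonical else 0.0
    return non_canonical, len(targets), ratio


# Invented sample: four filter pages collapse into two category pages.
sample_records = [
    ("/dresses?color=red", "/dresses"),
    ("/dresses?color=blue", "/dresses"),
    ("/dresses?size=m", "/dresses"),
    ("/dresses", "/dresses"),
    ("/shoes?p=2", "/shoes"),
    ("/about", None),
]
print(canonical_stats(sample_records))
```

A low ratio, as at gamiss.com, means many filter URLs collapse into a few canonical pages; a ratio close to 1, as at trendyol.com, means almost every non-canonical page has its own target.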

If, when creating a site, you decide to use canonical tags as a means of keeping pages out of the index, don’t forget that this is not a strict rule, just a recommendation for a search engine.

Conclusion

As our analysis shows, the technical SEO of big websites often leaves much to be desired. For fashion web-stores it is not SEO that attracts traffic, but brands. Nevertheless, almost every site has critical technical errors that need to be eliminated right away.

Our analysis also shows that the older the site is, the more 4xx and 5xx pages it has. Some SEO experts say that you needn’t worry as long as Google Webmaster doesn’t send an error notification. However, we’d like to remind you that Google Webmaster sends such a message only after it has indexed those errors and penalized your website for them. Knowing about errors before they are indexed is clearly much better.

Googlebot works really hard crawling a site, but only 10-30% of its work gets indexed. That’s why big sites need to constantly keep an eye on the indexing process and crawl budget, and need to clearly understand how Googlebot works.

Read more: Why Partial Technical Analysis Harms Your Website.