JetOctopus can crawl URLs of many different resource types, so you may see the “Non text/html page” status code in the crawl results. In this article, we will explain what this status code means and how it affects SEO.

What is the Non text/html page status code

The Non text/html page status code indicates that the crawler has accessed a URL that is not an HTML document. Most URLs that search engines scan and index are HTML documents or images. However, there are other types of resources that can be scanned by both the JetOctopus crawler and search bots.

Such resources include:

- forms for users;

- text files and tables uploaded to your domain;

- other types of files uploaded to your website.

For instance, the URL https://example.com/confirmation.docx is a text document uploaded to your domain. When you click on such a link, the document may start downloading to your device or open in the browser. These links are categorized as “Non text/html page” resources in crawl results. On the other hand, the link to a Google Sheet will not be classified in this category. However, you can find all links to your company’s Google Drive in the external links data table.
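The distinction above comes down to the media type a server reports for a URL. As a minimal sketch, assuming the classification follows the HTTP `Content-Type` response header (an assumption for illustration, not a description of how JetOctopus works internally), the check could look like this. The function names are hypothetical.

```python
def media_type(content_type_header: str) -> str:
    """Return the bare media type, without parameters such as charset."""
    return content_type_header.split(";", 1)[0].strip().lower()


def is_non_html(content_type_header: str) -> bool:
    """Return True when the header declares anything other than text/html."""
    return media_type(content_type_header) != "text/html"
```

For example, `is_non_html("application/pdf")` is true, while `is_non_html("text/html; charset=utf-8")` is false.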

Why does JetOctopus crawl such pages

During crawling, JetOctopus behaves like a search robot. This means that JetOctopus scans all internal URLs found in <a href> elements. If there are links to documents in the HTML code of your pages, JetOctopus will follow those links and scan the title, meta description, and other relevant information.
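To illustrate how links to documents get discovered, here is a rough sketch that collects internal URLs from `<a href>` elements using only the Python standard library. It treats a link as internal when its host matches the host of the page URL. That rule, and the names `LinkCollector` and `internal_links`, are assumptions made for this example; a real crawler applies more rules.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects absolute internal URLs found in <a href> elements."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.internal_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        # Resolve relative links against the page URL.
        absolute = urljoin(self.base_url, href)
        # Assumption: "internal" means the same host as the page itself.
        if urlparse(absolute).netloc == urlparse(self.base_url).netloc:
            self.internal_links.append(absolute)


def internal_links(html: str, base_url: str) -> list:
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.internal_links
```

A link such as `/confirmation.docx` on a page of `https://example.com` would be collected, while a link to a Google Sheet would be skipped because it points to another host.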

Why is it important to analyze Non text/html pages

As we mentioned earlier, JetOctopus behaves like search robots during crawling. Consequently, search engine crawlers can also find and crawl the same files.

This implies that search engines might spend crawl budget on loading and processing non-text/html pages such as PDF and text files. Some of these files may be very large, and the crawl budget is also determined by the number of bytes that Googlebot can process. Therefore, when assessing such files, it is crucial to determine their importance and relevance to SERP rankings. Consider whether you want search engines to download these files and expend the crawl budget, particularly if the files are large.
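One way to put a number on this is to estimate what share of all crawled bytes belongs to non-HTML files. The sketch below assumes you already have a list of `(url, media_type, size_bytes)` tuples, for example built from an exported data table. That tuple layout is hypothetical and is not a JetOctopus export format.

```python
def non_html_byte_share(pages) -> float:
    """Return the fraction of total bytes that belongs to non-HTML resources.

    pages: iterable of (url, media_type, size_bytes) tuples (hypothetical layout).
    """
    total_bytes = 0
    non_html_bytes = 0
    for _url, media_type, size_bytes in pages:
        total_bytes += size_bytes
        if media_type != "text/html":
            non_html_bytes += size_bytes
    # Avoid division by zero when the input is empty.
    return non_html_bytes / total_bytes if total_bytes else 0.0
```

A high share suggests that a few large documents account for most of the bytes a crawler has to download.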

Of course, analyzing non-text/html pages is critical from a user’s perspective. Not all users want to download files to their mobile device or computer. Additionally, it is critical to monitor the link’s behavior: whether the link will open in a new browser tab or the same tab. Therefore, we recommend examining the behavior of such files to ensure that they do not inconvenience users.

How to find Non text/html pages on your website

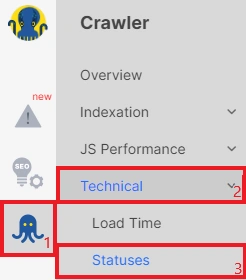

Go to the crawl results and select the “Overview” dashboard, then go to the “Technical” dashboard and select “Statuses”.

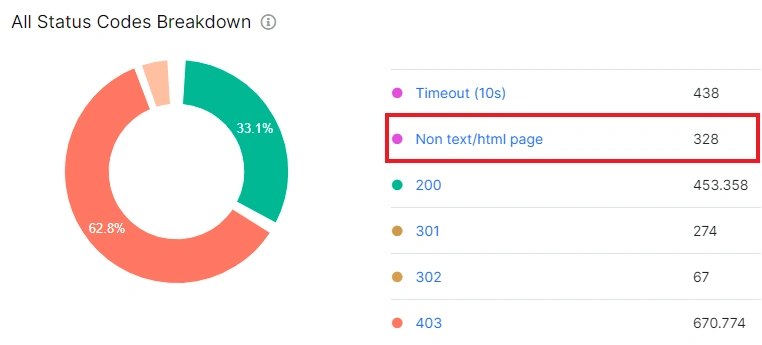

Look for the “Status codes” chart, which displays any non-text/html pages detected during the scan.

To obtain more detailed information, click on the desired chart section or on the “Non text/html page” option next to it. It is recommended to keep track of the number of active files on your website, which should not exceed the number of normal HTML pages.

In the data table, you can apply the necessary filters. For example, filter all files in txt format.

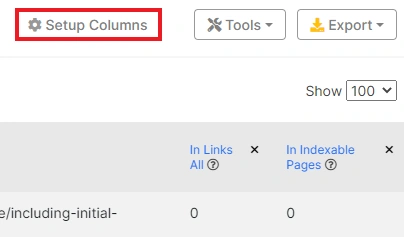

You can also customize additional columns by clicking on “Setup columns” and selecting desired options, such as page size, title, number of load tries and so on.

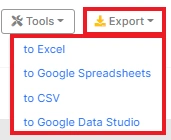

Finally, all data can be exported in a convenient format or uploaded directly to Looker Studio (formerly Google Data Studio).

What to pay attention to when analyzing non text/html pages

To ensure optimal use of non text/html pages, we recommend paying attention to the following points:

- file size: large files waste crawl budget and can use up users’ mobile data;

- title: ensure non text/html page titles accurately describe their content;

- content;

- mobile usability;

- indexing rules: files can be indexed by search engines, so ensure indexable files are relevant to users’ queries;

- anchor text pointing to the file.

The anchor text is crucial because users need to know what will happen when they click on a Non text/html page link.

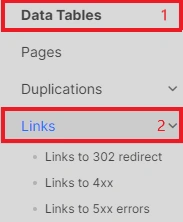

To find the anchors of non text/html pages, go to the “Links” data table.

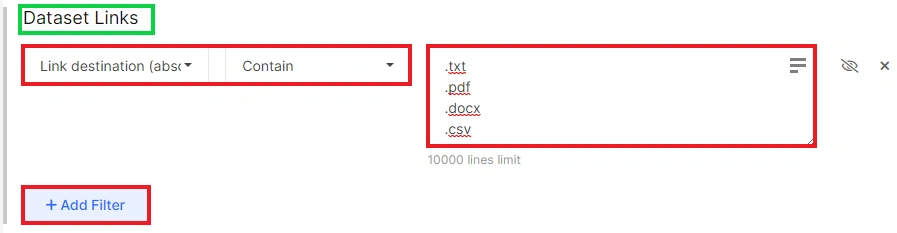

Next, add the “Link destination (absolute URL)” filter and specify the type of file you need.

Then apply any further filters needed to find links to the desired file format. For example, [Destination Page] URL – Contains – .doc.

We also suggest analyzing how frequently search engines scan non text/html pages. This can be accomplished by analyzing the logs and using the “URL” – “Contains” filter to compile a list of all file formats accessible on your website. It’s essential to keep an eye on the load time of these files and the status codes that Googlebot is receiving.
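If you want to run a similar check on raw server logs, the following sketch counts Googlebot requests to document files, grouped by file extension and status code. It assumes access logs in the common "combined" format and a hypothetical list of extensions to adjust for your site. It matches only on the user agent string, which can be spoofed, so it does not verify that a request really came from Google.

```python
import posixpath
import re
from collections import Counter

# Matches the request, status, size, referrer and user agent of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Hypothetical set of document extensions; adjust to the files on your site.
FILE_EXTENSIONS = {".pdf", ".doc", ".docx", ".txt", ".xls", ".xlsx", ".csv"}


def googlebot_file_hits(lines) -> Counter:
    """Count Googlebot requests to document files by (extension, status code)."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match["agent"]:
            continue
        # Drop the query string before looking at the extension.
        path = match["path"].split("?", 1)[0].lower()
        extension = posixpath.splitext(path)[1]
        if extension in FILE_EXTENSIONS:
            hits[(extension, match["status"])] += 1
    return hits
```

Passing an open log file to `googlebot_file_hits` returns a counter keyed by pairs such as `(".pdf", "200")`, which makes it easy to spot file types that are crawled often or that return errors.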

You can augment this information with data from Google Search Console to estimate the proportion of non-HTML pages that are being crawled by search engines. To see how much of the crawl budget is being used to scan text files, tables, and other documents, go to Settings – Crawl stats – Crawl requests: Other file type.

Analysis of status codes and scanned files and resources is an important part of technical SEO. Therefore, in addition to non text/html resources, we recommend that you pay attention to broken links, 5xx status codes and links to pages that return 301 or 302 redirects. Find more information about all status codes in the article: “Status codes: what they are and what they mean”.

You can also import your own data for scanning and checking.