If you have crawled the same website two or more times, you may have noticed that the crawl results differ. In this article, we explain why this happens and what to focus on when analyzing differences between crawls.

Below are the possible reasons why crawl results differ.

Different crawl settings

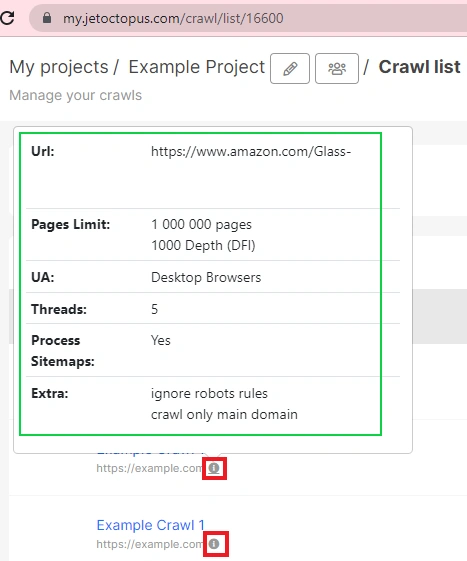

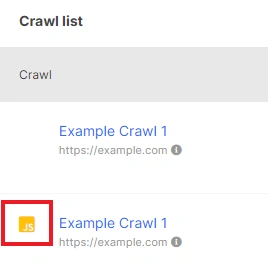

The first reason why crawl results for the same website differ is a different crawl configuration. To check which settings were used for a crawl, go to your project, where you will find the list of crawls.

Click the information icon next to the desired crawl, and a pop-up window with the crawl settings will appear.

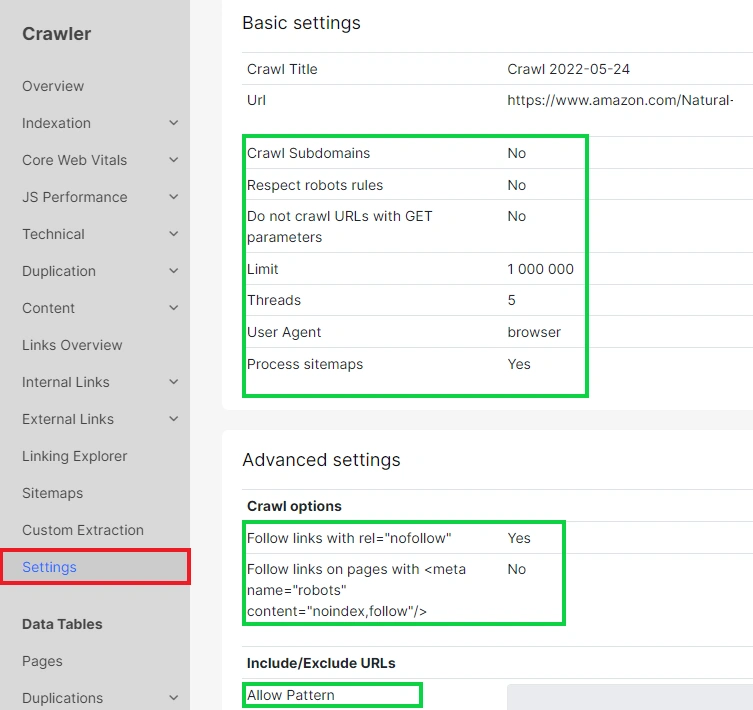

You can also find the crawl settings in a separate “Settings” report in the “Crawler” menu.

Note the following points:

- page limit – if crawls have different page limits, you will see a different number of pages in the results;

- page depth – defines how many levels of the website’s hierarchy the crawler should go through. If one crawl has “3” in the settings and the other has “1000”, the first crawl will have fewer pages, because the crawler will not scan URLs below the third level of the website’s hierarchy;

- browser type – if all settings are the same except for the browser type, the difference is an important point for analysis. If one of the versions, mobile or desktop, has fewer pages, search engines will also receive fewer pages from that version. For example, if links appear on your mobile version only after a user interacts with a page element, neither our crawler nor the search engine will be able to find those links. In this situation, make the URLs on the mobile version available in the code of the pages without interaction;

- sitemap processing – sitemaps may contain orphan pages, so if you activate the “Process sitemap” checkbox, the results of the crawl with sitemap processing may contain URLs that the other crawl does not have.
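
The effect of the page depth setting can be illustrated with a minimal sketch. The site structure below is hypothetical, and counting the start page as level 1 is an assumption made for this example, not a description of how our crawler counts levels internally.

```python
from collections import deque

# Hypothetical site structure: each URL maps to the URLs it links to.
SITE = {
    "/": ["/category"],
    "/category": ["/category/page-2"],
    "/category/page-2": ["/product"],
    "/product": ["/product/reviews"],
    "/product/reviews": [],
}

def crawl(site, start, max_depth):
    """Breadth-first crawl that never goes deeper than max_depth levels."""
    depth_of = {start: 1}  # assumption: the start page is level 1
    queue = deque([start])
    while queue:
        url = queue.popleft()
        if depth_of[url] >= max_depth:
            continue  # do not follow links from pages at the depth limit
        for link in site.get(url, []):
            if link not in depth_of:
                depth_of[link] = depth_of[url] + 1
                queue.append(link)
    return set(depth_of)

shallow = crawl(SITE, "/", max_depth=3)
deep = crawl(SITE, "/", max_depth=1000)

# URLs that only the deeper crawl reaches
print(sorted(deep - shallow))
```

With these sample pages, the crawl limited to three levels stops at `/category/page-2`, while the deeper crawl also reaches the product pages below it.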

More information: How to find orphan pages with JetOctopus.

Also pay attention to the “Respect robots rules” / “Ignore robots rules” setting. If you activated the “Respect robots rules” checkbox when setting up the crawl, our crawler follows all the directives for search engines and does not scan URLs that are, for example, blocked by the robots.txt file. Therefore, crawls with different robots rules will produce different results.
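
To get a rough idea of how many URLs a robots.txt file hides from a crawl that respects it, you can test a list of URLs against the rules yourself. The sketch below uses Python's standard `urllib.robotparser` module; the robots.txt content and the URLs are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
"""

# Hypothetical URLs found on the website.
URLS = [
    "https://example.com/",
    "https://example.com/cart/checkout",
    "https://example.com/search?q=shoes",
    "https://example.com/products/shoes",
]

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# A crawl that respects robots rules only scans the allowed URLs;
# a crawl that ignores them scans everything it finds.
respecting = [url for url in URLS if parser.can_fetch("*", url)]
skipped = [url for url in URLS if url not in respecting]

print("Scanned when respecting robots rules:", respecting)
print("Only scanned when ignoring robots rules:", skipped)
```

In this sample, the cart and search URLs appear only in the crawl that ignores robots rules.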

Also check whether the crawl covered only the main domain or the subdomains as well, and whether a custom robots.txt file was used.

You will find all the settings that can affect the crawl results in the information window or in the “Settings” report in the crawl results.
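
If you compare many crawls, it can help to write the settings down and diff them. The sketch below assumes you have copied the settings by hand from the information window; the key names are illustrative and do not correspond to a real export format.

```python
# Hypothetical settings copied by hand from the crawl information window.
crawl_a = {
    "page_limit": 100000,
    "page_depth": 1000,
    "browser": "desktop",
    "process_sitemap": True,
    "respect_robots": True,
    "execute_js": False,
}
crawl_b = {
    "page_limit": 100000,
    "page_depth": 3,
    "browser": "mobile",
    "process_sitemap": True,
    "respect_robots": True,
    "execute_js": False,
}

def diff_settings(a, b):
    """Return {setting: (value_a, value_b)} for every setting that differs."""
    keys = sorted(set(a) | set(b))
    return {k: (a.get(k), b.get(k)) for k in keys if a.get(k) != b.get(k)}

differences = diff_settings(crawl_a, crawl_b)
for name, (value_a, value_b) in differences.items():
    print(f"{name}: {value_a} vs {value_b}")
```

Any setting listed in the output is a candidate explanation for the different results.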

Updates of the website

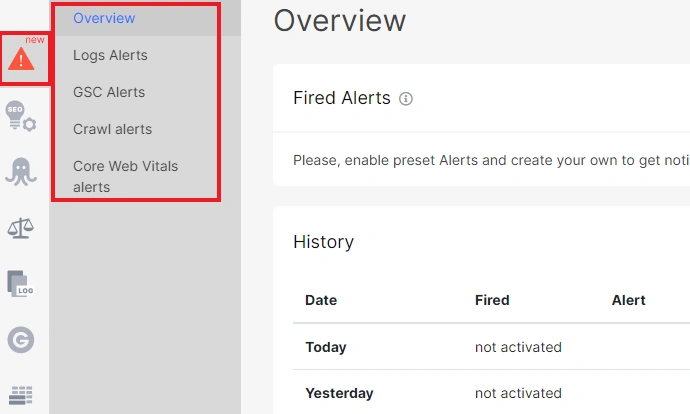

Crawl results often differ because the website was updated between crawls. This is especially relevant for large and e-commerce websites. You can set up alerts to track whether an update has changed elements that are critical for SEO.

Sitemaps have been updated

If you select “Process sitemap” in the crawl settings and your sitemaps have been updated between crawls, the crawl results will be different.
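
To see what changed in a sitemap between two crawls, you can compare two saved copies of it. The sketch below is a minimal example that assumes both copies follow the standard sitemaps.org XML format; the sitemap contents are invented for illustration.

```python
import xml.etree.ElementTree as ET

# Namespace used by the standard sitemap protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical copies of the same sitemap saved before each crawl.
OLD_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/old-page</loc></url>
</urlset>"""

NEW_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/new-page</loc></url>
</urlset>"""

def sitemap_urls(xml_text):
    """Return the set of <loc> URLs listed in a sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)}

old_urls = sitemap_urls(OLD_SITEMAP)
new_urls = sitemap_urls(NEW_SITEMAP)

added = new_urls - old_urls      # URLs only the later crawl could pick up
removed = old_urls - new_urls    # URLs only the earlier crawl could pick up

print("Added:", sorted(added))
print("Removed:", sorted(removed))
```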

You have set a small page limit for a large website

If you have a large website with many URLs on different levels and a wide horizontal structure, the results may differ even with the same crawl configuration and the same page limit, because a limited crawl covers only part of the website. For this reason, we recommend a complete crawl for websites of all sizes.
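
The following sketch shows one way a page limit can produce different results on a wide website. It assumes, purely for illustration, that links are discovered in a different order on each run; this is a simplified model, not a description of how our crawler schedules URLs. The site structure is hypothetical.

```python
from collections import deque

# Hypothetical wide site: the home page links to many sections.
SITE = {
    "/": ["/a", "/b", "/c", "/d"],
    "/a": [],
    "/b": [],
    "/c": [],
    "/d": [],
}

def crawl(site, start, page_limit, reverse_links=False):
    """Breadth-first crawl that stops once page_limit URLs are collected."""
    seen = [start]
    queue = deque([start])
    while queue and len(seen) < page_limit:
        url = queue.popleft()
        links = site.get(url, [])
        if reverse_links:
            # Simulates a different discovery order on another run.
            links = list(reversed(links))
        for link in links:
            if link not in seen and len(seen) < page_limit:
                seen.append(link)
                queue.append(link)
    return set(seen)

run_1 = crawl(SITE, "/", page_limit=3)
run_2 = crawl(SITE, "/", page_limit=3, reverse_links=True)
full_1 = crawl(SITE, "/", page_limit=100)
full_2 = crawl(SITE, "/", page_limit=100, reverse_links=True)

print("Limited crawls match:", run_1 == run_2)
print("Complete crawls match:", full_1 == full_2)
```

In this model, the two limited runs collect different sets of three pages, while the two complete runs always contain the same pages.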

More information: Why Is Partial Crawling Bad for Big Websites? How Does It Impact SEO?

Some pages were unavailable during crawling

If some pages returned 5xx response codes during one of the crawls, our crawler could not access the page code and could not get the list of links from it. 5xx status codes are usually temporary and may indicate server overload, which can also be caused by our crawler. Check the crawl results for pages with 5xx status codes. If there are any, the results of the crawls will differ.
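
If you export the URLs and status codes of both crawls, a few lines of code are enough to spot the pages that failed. The rows below are hypothetical sample data, and the `(url, status_code)` layout is an assumption about how you arrange the export, not a fixed format.

```python
# Hypothetical (url, status_code) rows taken from two crawl exports.
crawl_1 = [("/", 200), ("/catalog", 200), ("/catalog/shoes", 200)]
crawl_2 = [("/", 200), ("/catalog", 503)]

def server_errors(rows):
    """Return the URLs that answered with a 5xx status code."""
    return sorted(url for url, status in rows if 500 <= status <= 599)

def crawled_urls(rows):
    """Return the set of URLs present in a crawl."""
    return {url for url, _ in rows}

errors_in_crawl_2 = server_errors(crawl_2)
missing_in_crawl_2 = sorted(crawled_urls(crawl_1) - crawled_urls(crawl_2))

print("5xx pages in crawl 2:", errors_in_crawl_2)
print("URLs missing from crawl 2:", missing_in_crawl_2)
```

Here `/catalog` returned 503 in the second crawl, so the crawler could not read its links and `/catalog/shoes` is missing from the results.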

More information: What if you run a crawl and your website returns the 503 response code?

JavaScript – HTML

The results of two crawls may differ if you chose “Execute JavaScript” for one crawl and not for the other. JavaScript crawls are marked with a JS icon in the list of crawls.

More information: Why do JavaScript and HTML versions differ in crawl results?