According to statistics, medium-sized websites are the ones most often attacked by fake and malicious bots. So it is important not only to monitor the crawl statistics of verified Google, Bing and other search engine bots, but also to detect and block malicious bots. In this article, we explain how to detect such bots using JetOctopus.

What are fake bots?

Fake bots are automated bots that visit websites for various purposes. Some fake bots simply collect information or scrape data from your website. Competitors can then use this scraped data to analyze your prices or content.

Some fake bots are more harmful and can pose a threat to your website.

Any fake bot can also overload your website, so that neither users nor valid search bots are able to access it.

Fake bots often disguise themselves as (or emulate) search bots and use the same user agent data as verified search engines, so they can be difficult to detect. If you suspect that unusual activity is being generated by a fake bot that uses a Google user agent, you can verify the bot by running a reverse DNS lookup (or reverse IP lookup) on its IP address. You can also use the Googlebot verification tool in JetOctopus.

More information: Product Update. Verifying Googlebot with JetOctopus.
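The reverse DNS check mentioned above can be sketched in Python. This is a minimal illustration, not the way JetOctopus performs verification: the function name `is_verified_googlebot` and the injectable `reverse_lookup` and `forward_lookup` parameters are our own, and the hostname suffixes are an assumption based on Google's public guidance, so check the current documentation before relying on them. The default forward lookup only handles IPv4.

```python
import socket

# Assumption: hostname suffixes that Google documents for its crawlers.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def is_verified_googlebot(ip, reverse_lookup=None, forward_lookup=None):
    """Reverse DNS check followed by a forward lookup that must return the same IP."""
    # The lookups are injectable so the logic can be tested without network access.
    reverse_lookup = reverse_lookup or (lambda addr: socket.gethostbyaddr(addr)[0])
    forward_lookup = forward_lookup or (lambda host: socket.gethostbyname_ex(host)[2])

    try:
        hostname = reverse_lookup(ip)
    except OSError:
        # No PTR record or a DNS failure: treat the visitor as unverified.
        return False

    # The leading dot in each suffix rejects hosts such as "evilgooglebot.com".
    if not hostname.lower().rstrip(".").endswith(GOOGLE_SUFFIXES):
        return False

    try:
        # Forward confirmation: the hostname must resolve back to the original IP.
        return ip in forward_lookup(hostname)
    except OSError:
        return False
```

Calling `is_verified_googlebot("66.249.66.1")` performs real DNS queries, so the result depends on your resolver. The forward confirmation step matters because anyone who controls the reverse DNS zone of their own IP range can publish a PTR record that merely looks like a Google hostname.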

Why you need to pay attention to the activity of fake bots

Fake bots can be a huge problem for your website. They can move through the pages of your website very quickly to gather information, or simply to create a heavy load on your web server. Most bots perform automated actions, but some imitate the behavior of search engines. Others imitate the behavior of users: they add products to the cart, fill out contact forms, book meetings, etc.

In any case, every fake bot can cause losses across your marketing channels and can distort your analytics of search robot activity.

The worst situation is when fake bots overload your website so much that the server starts returning 5xx status codes. As a result, users will not be able to add items to their cart or enjoy your great content, and search engines will not be able to crawl your website. After receiving 5xx status codes, search bots reduce the crawl budget for your website, which can negatively affect organic traffic.

How to analyze fake bots with JetOctopus

Using JetOctopus, you can analyze the activity of fake bots. First of all, we focus on bots that imitate Google and other search engines.

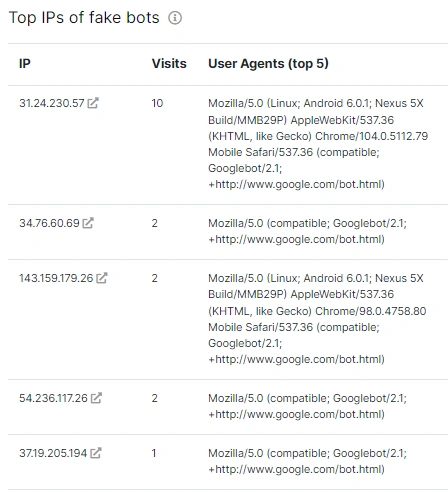

However, in JetOctopus you can also find a list of the IP addresses that visit your website most often and do not belong to search engines. Compare the activity of these IPs with the activity of real users. A real user's IP rarely visits a website that often, so IPs with unusually high activity are very likely fake bots that should be blocked. Before blocking anything, exclude from this list your corporate IPs and the IPs of useful services that you or your colleagues use.
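If you want to cross-check the most active IPs against your raw access logs, a short script is enough. The sketch below is not how JetOctopus builds its report. It assumes that the client IP is the first whitespace-separated field of each log line (as in the common and combined log formats), and the function name `top_ips` and its `allowlist` parameter are hypothetical.

```python
from collections import Counter
from ipaddress import ip_address, ip_network


def top_ips(log_lines, allowlist=(), limit=10):
    """Count requests per client IP, skipping allowlisted addresses and networks."""
    # strict=False accepts entries such as "10.0.0.5/8" as well as "10.0.0.0/8".
    networks = [ip_network(entry, strict=False) for entry in allowlist]
    counts = Counter()
    for line in log_lines:
        parts = line.split(maxsplit=1)
        if not parts:
            continue  # blank line
        try:
            ip = ip_address(parts[0])
        except ValueError:
            continue  # first field is not an IP address
        if any(ip in net for net in networks):
            continue  # corporate IPs and trusted services
        counts[str(ip)] += 1
    return counts.most_common(limit)
```

For example, `top_ips(open("access.log"), allowlist=["10.0.0.0/8"])` returns the ten busiest IPs outside the `10.0.0.0/8` range; both the file name and the range are placeholders.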

To find a list of IP addresses that most often visit your website, you need to connect logs. After integrating the logs, go to the appropriate section and select the “Other bots” report.

The “Top IPs of fake bots” list shows the bots imitating search engines, their user agents, and the number of visits for the selected period and domain (both can be set at the top of the page).

The bigger and more popular the website, the more fake bots there will be on this list.

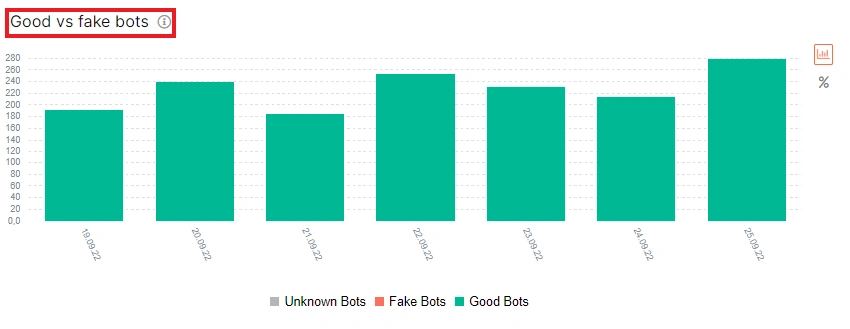

Now let’s move on to analyzing the behavior of fake bots. The same report contains a “Good vs fake bots” diagram, which shows the activity of fake and valid bots over time. Valid bots are marked green, fake bots are marked red.

All columns are clickable: clicking on a column will take you to a data table with a list of visits by valid or fake bots.

Pay attention to how the activity of verified bots changes if your website was attacked by fake bots during the same period or shortly before it. You may find that fake bots have a direct impact on your crawl budget.

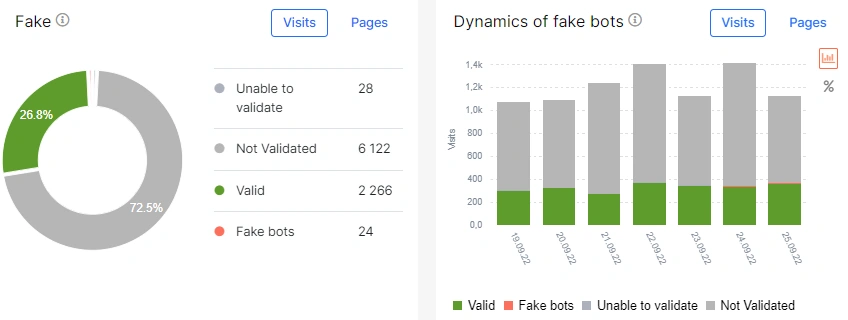

We also recommend analyzing the data in the “Logs” – “Overview” report. On the charts “Fake” and “Dynamics of fake bots” you will find the ratio of visits by verified and fake bots that emulate search engines.

It is worth closely monitoring these statistics and blocking fake bots in time.

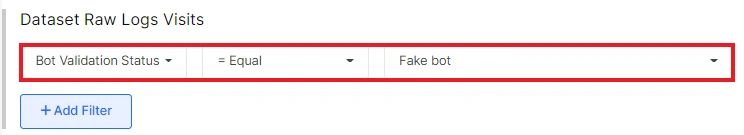

For a detailed analysis of fake bots and their activity, go to the data table and set the filter “Bot Validation Status” – “= Equal” – “Fake bot”.

If necessary, configure additional filters, for example, select a certain type of pages if you want to analyze bot activity on these pages.

All data can be exported in a convenient format.

How to block fake bots

Once you identify fake bots, you need to block them. Both manual IP blocking at the server level and automated solutions can be used.
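For manual blocking, the exact steps depend on your web server or firewall. As one illustration, assuming your server is nginx, the helper below turns a list of IPs or CIDR ranges into `deny` directives that you could place in an included configuration file. The function name `nginx_deny_rules` is hypothetical, and the sketch only generates text: it does not touch any server configuration.

```python
from ipaddress import ip_network


def nginx_deny_rules(addresses):
    """Render validated, de-duplicated nginx `deny` directives, one per line."""
    # ip_network raises ValueError for anything that is not an IP or CIDR range.
    networks = {ip_network(address, strict=False) for address in addresses}
    lines = []
    # Sort by IP version first so IPv4 and IPv6 networks are never compared directly.
    for net in sorted(networks, key=lambda n: (n.version, n)):
        # Single hosts are written without a prefix length.
        target = str(net.network_address) if net.num_addresses == 1 else str(net)
        lines.append(f"deny {target};")
    return "\n".join(lines)
```

Review the generated list before applying it, and make sure it contains no verified search engine IPs, corporate IPs or IPs of services you rely on.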

Automated protection systems usually run a reverse DNS lookup on the visitor's IP address when a request uses a search engine user agent but does not come from a verified IP address.

Keep in mind that blocked fake bots often look for another way to attack your website again, so fake bot monitoring should be an ongoing process.