Analyzing search robot activity is an important part of technical SEO. Some robots may visit your website too often and overload your web server, while the number of sessions and conversions from the corresponding search engine stays close to zero. In such cases, it makes sense to limit the activity of those robots.

Read more: How Googlebot almost killed an e-commerce website with extreme crawling.

In addition, there are various fake bots emulating real search robots. By blocking fake bots, you can also save your web server resources and ensure website security.

If you’re interested in search robots, you’ll love our research: Internet of Bots: A Quick Research on Bot Traffic Share and Fake Googlebots.

With JetOctopus, you can easily find out what types of robots are visiting your website. To do this, you need to have your logs integrated.

How to find out what types of robots visit your site

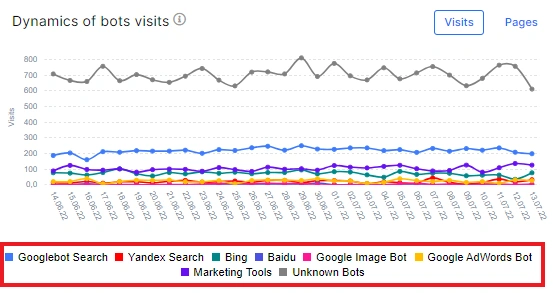

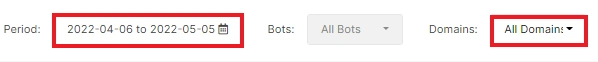

We pay close attention to search engines and other bots, and offer many reports that show the activity of various bots. We recommend starting with the “Overview” report in the “Logs” menu. The “Dynamics of bots visits” chart shows how visits from the best-known bots change over time, namely:

- Googlebot Search (includes Mobile and Desktop) – verified bots;

- Yandex Search – verified bots;

- Bing and Baidu verified bots;

- Google Image Bot – Google search robots that process and index images;

- Google AdWords Bot – analyzing the activity of this bot is very important if you use paid advertising to promote your website;

- Marketing Tools – various services and tools that regularly scan your website to find external links and collect information about its pages; here we include only verified robots, for example, Ahrefs, Majestic and so on;

- Unknown Bots – various unverified robots crawling your website.

If a robot is too active and does not matter for your website traffic, you can block it in the robots.txt file, with server settings, Cloudflare, or other security services.
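As a rough illustration of the robots.txt option, the sketch below uses Python's standard `urllib.robotparser` to check that a rule blocks one crawler while leaving others unaffected. The bot name `ExampleBot`, the URL and the helper `is_allowed` are placeholders, not names from JetOctopus. Note that robots.txt only works for robots that choose to respect it; fake bots usually have to be blocked at the server or firewall level.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content: "ExampleBot" is a placeholder bot name.
ROBOTS_TXT = """\
User-agent: ExampleBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given user agent may fetch the URL under these rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# The blocked bot is denied, while any other robot falls under the "*" group.
blocked = is_allowed(ROBOTS_TXT, "ExampleBot", "https://example.com/catalog/")
allowed = is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/catalog/")
```

Checking the rules this way before deploying them helps avoid accidentally blocking a search robot that does bring traffic.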

Please note that you can filter the data by date and by domain.

This report also contains a pie chart showing each robot's share of all visits. This is an extremely important chart because it shows which robots put the highest load on your website. These are usually not search engines, but fake bots or marketing tools.
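The share behind such a chart is simple arithmetic: each bot type's hits divided by the total. A minimal sketch follows, assuming you already have hit counts per bot type; the numbers and the function name `visit_shares` are invented for illustration and are not JetOctopus data.

```python
def visit_shares(counts: dict) -> dict:
    """Return each bot type's share of total hits in percent, largest first."""
    total = sum(counts.values())
    if total == 0:
        return {}
    ordered = sorted(counts.items(), key=lambda item: item[1], reverse=True)
    return {name: round(100 * hits / total, 1) for name, hits in ordered}


# Hypothetical hit counts per bot type for one period.
SAMPLE_COUNTS = {
    "Googlebot Search": 1200,
    "Marketing Tools": 2400,
    "Unknown Bots": 400,
}

shares = visit_shares(SAMPLE_COUNTS)
```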

Clicking on a type of robot or on a segment of the diagram will take you to a data table where you can analyze the behavior of each type of robot in detail.

Pay attention to:

- which types of pages are most often visited by search robots;

- which referrers appear in the requests;

- which user agents visit your website (one robot can have several user agents, for example, Android and iOS).
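If you want to cross-check these three points against raw logs, the sketch below counts page sections, referrers and user agents. It assumes access logs in the common "combined" format used by Apache and nginx; the sample lines, the `summarize` helper and the use of the first path segment as a "page type" are illustrative assumptions, not how JetOctopus processes logs.

```python
import re
from collections import Counter

# Combined log format:
# ip - - [time] "METHOD path PROTOCOL" status size "referrer" "user agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"$'
)


def summarize(lines):
    """Count hits per first path segment, per referrer and per user agent."""
    sections, referrers, user_agents = Counter(), Counter(), Counter()
    for line in lines:
        match = LOG_PATTERN.match(line.strip())
        if match is None:
            continue  # skip lines that are not in the expected format
        # Use the first path segment as a rough stand-in for the page type.
        path = match["path"].split("?", 1)[0]
        segment = path.strip("/").split("/", 1)[0]
        sections["/" + segment] += 1
        referrers[match["referrer"]] += 1
        user_agents[match["user_agent"]] += 1
    return sections, referrers, user_agents


# Made-up sample lines using documentation IP ranges.
SAMPLE_LINES = [
    '192.0.2.1 - - [10/Oct/2023:13:55:36 +0000] "GET /product/42 HTTP/1.1" '
    '200 512 "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"',
    '192.0.2.1 - - [10/Oct/2023:13:55:40 +0000] "GET /product/43?color=red HTTP/1.1" '
    '200 498 "-" "Googlebot/2.1 (+http://www.google.com/bot.html)"',
    '198.51.100.7 - - [10/Oct/2023:13:56:02 +0000] "GET /blog/post HTTP/1.1" '
    '200 1024 "https://example.com/" "ExampleBot/1.0"',
]

sections, referrers, user_agents = summarize(SAMPLE_LINES)
```

Calling `sections.most_common(10)` or `user_agents.most_common(10)` then gives a quick ranking of the most visited sections and the most active user agents.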

This information will help you build a powerful SEO strategy. If most of your web pages are visited by Googlebot on Android, then it makes sense to optimize performance for the corresponding mobile devices first.

Analysis of the activity of fake robots

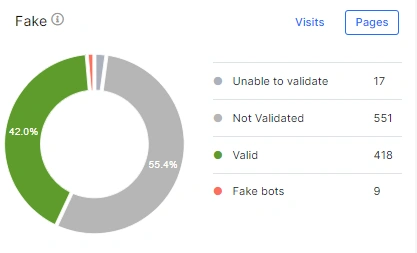

Fake bots constantly visit websites. Their activity is uncontrolled and can overload your web server. Verified search robots, by contrast, reduce their crawling activity when they receive a large number of 5xx response codes (status codes that indicate server problems).
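This article does not describe how JetOctopus tells verified bots from fake ones. One widely used approach, which Google documents for Googlebot, is a forward-confirmed reverse DNS lookup: resolve the visiting IP to a hostname, check that the hostname belongs to the search engine's domain, then resolve the hostname back and confirm it returns the same IP. The sketch below is a minimal illustration of that check. The function names are hypothetical, and the resolvers are passed in as parameters so the logic can be tried without network access.

```python
import socket

# Hostname suffixes that Google documents for Googlebot.
# Other search engines publish their own domains.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def reverse_lookup(ip: str) -> str:
    """Resolve an IP address to its primary hostname (PTR record)."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(hostname: str) -> list:
    """Resolve a hostname to its list of IPv4 addresses."""
    return socket.gethostbyname_ex(hostname)[2]


def is_verified_googlebot(ip, reverse=reverse_lookup, forward=forward_lookup):
    """Forward-confirmed reverse DNS check for a visiting IP address."""
    try:
        hostname = reverse(ip).rstrip(".")
        if not hostname.endswith(GOOGLE_SUFFIXES):
            return False
        # The hostname must resolve back to the same IP; otherwise the
        # PTR record could have been spoofed by whoever controls the IP.
        return ip in forward(hostname)
    except OSError:
        # socket.herror and socket.gaierror are subclasses of OSError.
        return False
```

A request that claims to be Googlebot in its user agent but fails this check can be treated as a fake bot.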

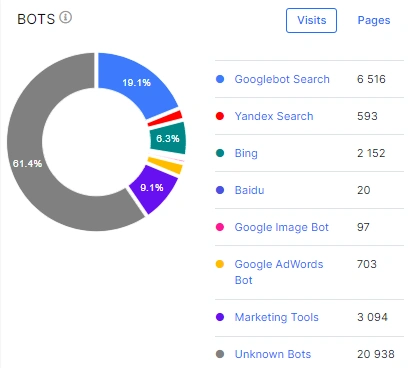

The ratio of fake to verified bot activity may surprise you. You can see it on the “Fake” chart in the “Overview” section.

Here you can also see the dynamics of visits by fake robots during the selected period.

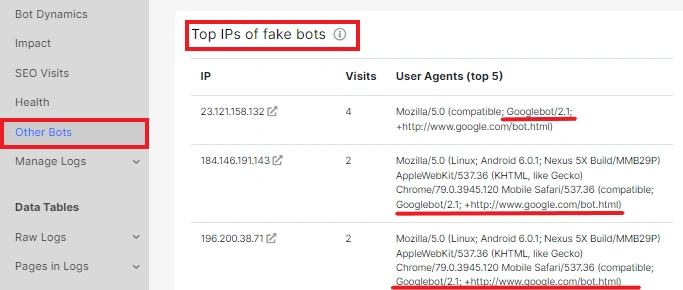

For a detailed analysis of all types of fake bots, go to the “Other bots” report. Here you will find a list of fake bots that visit your website most often.

Using data from this report, you can block the most malicious bots.

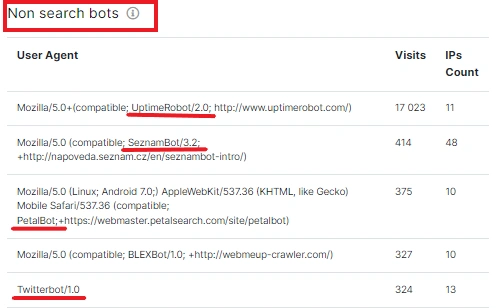

In addition, we list non-search robots (e.g., various marketing tools, safe external crawlers, etc.). If a user agent is too active and is causing problems, you can also block it based on data from JetOctopus.

If you know which types of search robots visit your site, you can build a powerful SEO strategy based on this data.